### _Why are the changes needed?_

#### 1. know about this pr

When we execute flink(1.17+) test case, it may throw exception when the test case is `show/stop job`, the exception desc like this

```

- execute statement - show/stop jobs *** FAILED ***

org.apache.kyuubi.jdbc.hive.KyuubiSQLException: Error operating ExecuteStatement: org.apache.flink.table.gateway.service.utils.SqlExecutionException: Could not stop job 4dece26857fab91d63fad1abd8c6bdd0 with savepoint for operation 9ed8247a-b7bd-4004-875b-61ba654ab3dd.

at org.apache.flink.table.gateway.service.operation.OperationExecutor.lambda$callStopJobOperation$11(OperationExecutor.java:628)

at org.apache.flink.table.gateway.service.operation.OperationExecutor.runClusterAction(OperationExecutor.java:716)

at org.apache.flink.table.gateway.service.operation.OperationExecutor.callStopJobOperation(OperationExecutor.java:601)

at org.apache.flink.table.gateway.service.operation.OperationExecutor.executeOperation(OperationExecutor.java:434)

at org.apache.flink.table.gateway.service.operation.OperationExecutor.executeStatement(OperationExecutor.java:195)

at org.apache.kyuubi.engine.flink.operation.ExecuteStatement.executeStatement(ExecuteStatement.scala:64)

at org.apache.kyuubi.engine.flink.operation.ExecuteStatement.runInternal(ExecuteStatement.scala:56)

at org.apache.kyuubi.operation.AbstractOperation.run(AbstractOperation.scala:171)

at org.apache.kyuubi.session.AbstractSession.runOperation(AbstractSession.scala:101)

at org.apache.kyuubi.session.AbstractSession.$anonfun$executeStatement$1(AbstractSession.scala:131)

at org.apache.kyuubi.session.AbstractSession.withAcquireRelease(AbstractSession.scala:82)

at org.apache.kyuubi.session.AbstractSession.executeStatement(AbstractSession.scala:128)

at org.apache.kyuubi.service.AbstractBackendService.executeStatement(AbstractBackendService.scala:67)

at org.apache.kyuubi.service.TFrontendService.ExecuteStatement(TFrontendService.scala:252)

at org.apache.kyuubi.shade.org.apache.hive.service.rpc.thrift.TCLIService$Processor$ExecuteStatement.getResult(TCLIService.java:1557)

at org.apache.kyuubi.shade.org.apache.hive.service.rpc.thrift.TCLIService$Processor$ExecuteStatement.getResult(TCLIService.java:1542)

at org.apache.kyuubi.shade.org.apache.thrift.ProcessFunction.process(ProcessFunction.java:39)

at org.apache.kyuubi.shade.org.apache.thrift.TBaseProcessor.process(TBaseProcessor.java:39)

at org.apache.kyuubi.service.authentication.TSetIpAddressProcessor.process(TSetIpAddressProcessor.scala:36)

at org.apache.kyuubi.shade.org.apache.thrift.server.TThreadPoolServer$WorkerProcess.run(TThreadPoolServer.java:286)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1[149](https://github.com/apache/kyuubi/actions/runs/6649714451/job/18068699087?pr=5501#step:8:150))

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:750)

Caused by: java.util.concurrent.ExecutionException: java.util.concurrent.CompletionException: java.util.concurrent.CompletionException: org.apache.flink.runtime.checkpoint.CheckpointException: Checkpoint triggering task Source: tbl_a[6] -> Sink: tbl_b[7] (1/1) of job 4dece26857fab91d63fad1abd8c6bdd0 is not being executed at the moment. Aborting checkpoint. Failure reason: Not all required tasks are currently running.

at java.util.concurrent.CompletableFuture.reportGet(CompletableFuture.java:357)

at java.util.concurrent.CompletableFuture.get(CompletableFuture.java:1928)

at org.apache.flink.table.gateway.service.operation.OperationExecutor.lambda$callStopJobOperation$11(OperationExecutor.java:617)

... 22 more

Caused by: java.util.concurrent.CompletionException: java.util.concurrent.CompletionException: org.apache.flink.runtime.checkpoint.CheckpointException: Checkpoint triggering task Source: tbl_a[6] -> Sink: tbl_b[7] (1/1) of job 4dece26857fab91d63fad1abd8c6bdd0 is not being executed at the moment. Aborting checkpoint. Failure reason: Not all required tasks are currently running.

at java.util.concurrent.CompletableFuture.encodeRelay(CompletableFuture.java:326)

at java.util.concurrent.CompletableFuture.completeRelay(CompletableFuture.java:338)

at java.util.concurrent.CompletableFuture.uniRelay(CompletableFuture.java:925)

at java.util.concurrent.CompletableFuture$UniRelay.tryFire(CompletableFuture.java:913)

at java.util.concurrent.CompletableFuture.postComplete(CompletableFuture.java:488)

at java.util.concurrent.CompletableFuture.completeExceptionally(CompletableFuture.java:1990)

at org.apache.flink.runtime.rpc.akka.AkkaInvocationHandler.lambda$invokeRpc$1(AkkaInvocationHandler.java:260)

at java.util.concurrent.CompletableFuture.uniWhenComplete(CompletableFuture.java:774)

at java.util.concurrent.CompletableFuture$UniWhenComplete.tryFire(CompletableFuture.java:750)

at java.util.concurrent.CompletableFuture.postComplete(CompletableFuture.java:488)

at java.util.concurrent.CompletableFuture.completeExceptionally(CompletableFuture.java:1990)

at org.apache.flink.util.concurrent.FutureUtils.doForward(FutureUtils.java:1298)

at org.apache.flink.runtime.concurrent.akka.ClassLoadingUtils.lambda$null$1(ClassLoadingUtils.java:93)

at org.apache.flink.runtime.concurrent.akka.ClassLoadingUtils.runWithContextClassLoader(ClassLoadingUtils.java:68)

at org.apache.flink.runtime.concurrent.akka.ClassLoadingUtils.lambda$guardCompletionWithContextClassLoader$2(ClassLoadingUtils.java:92)

at java.util.concurrent.CompletableFuture.uniWhenComplete(CompletableFuture.java:774)

at java.util.concurrent.CompletableFuture$UniWhenComplete.tryFire(CompletableFuture.java:750)

at java.util.concurrent.CompletableFuture.postComplete(CompletableFuture.java:488)

at java.util.concurrent.CompletableFuture.completeExceptionally(CompletableFuture.java:1990)

at org.apache.flink.runtime.concurrent.akka.AkkaFutureUtils$1.onComplete(AkkaFutureUtils.java:45)

at akka.dispatch.OnComplete.internal(Future.scala:299)

at akka.dispatch.OnComplete.internal(Future.scala:297)

at akka.dispatch.japi$CallbackBridge.apply(Future.scala:224)

at akka.dispatch.japi$CallbackBridge.apply(Future.scala:221)

at org.apache.kyuubi.operation.AbstractOperation.run(AbstractOperation.scala:171)

...

Cause: java.lang.RuntimeException: org.apache.flink.util.SerializedThrowable:java.util.concurrent.CompletionException: org.apache.flink.runtime.checkpoint.CheckpointException: Checkpoint triggering task Source: tbl_a[6] -> Sink: tbl_b[7] (1/1) of job 4dece26857fab91d63fad1abd8c6bdd0 is not being executed at the moment. Aborting checkpoint. Failure reason: Not all required tasks are currently running.

at java.util.concurrent.CompletableFuture.encodeRelay(CompletableFuture.java:326)

at java.util.concurrent.CompletableFuture.completeRelay(CompletableFuture.java:338)

at java.util.concurrent.CompletableFuture.uniRelay(CompletableFuture.java:925)

at java.util.concurrent.CompletableFuture$UniRelay.tryFire(CompletableFuture.java:913)

at java.util.concurrent.CompletableFuture.postComplete(CompletableFuture.java:488)

at java.util.concurrent.CompletableFuture.completeExceptionally(CompletableFuture.java:1990)

at org.apache.flink.runtime.rpc.akka.AkkaInvocationHandler.lambda$invokeRpc$1(AkkaInvocationHandler.java:260)

at java.util.concurrent.CompletableFuture.uniWhenComplete(CompletableFuture.java:774)

at java.util.concurrent.CompletableFuture$UniWhenComplete.tryFire(CompletableFuture.java:750)

at java.util.concurrent.CompletableFuture.postComplete(CompletableFuture.java:488)

...

Cause: java.lang.RuntimeException: org.apache.flink.util.SerializedThrowable:org.apache.flink.runtime.checkpoint.CheckpointException: Checkpoint triggering task Source: tbl_a[6] -> Sink: tbl_b[7] (1/1) of job 4dece26857fab91d63fad1abd8c6bdd0 is not being executed at the moment. Aborting checkpoint. Failure reason: Not all required tasks are currently running.

at org.apache.flink.runtime.checkpoint.DefaultCheckpointPlanCalculator.checkTasksStarted(DefaultCheckpointPlanCalculator.java:143)

at org.apache.flink.runtime.checkpoint.DefaultCheckpointPlanCalculator.lambda$calculateCheckpointPlan$1(DefaultCheckpointPlanCalculator.java:105)

at java.util.concurrent.CompletableFuture$AsyncSupply.run(CompletableFuture.java:[160](https://github.com/apache/kyuubi/actions/runs/6649714451/job/18068699087?pr=5501#step:8:161)4)

at org.apache.flink.runtime.rpc.akka.AkkaRpcActor.lambda$handleRunAsync$4(AkkaRpcActor.java:453)

at org.apache.flink.runtime.concurrent.akka.ClassLoadingUtils.runWithContextClassLoader(ClassLoadingUtils.java:68)

at org.apache.flink.runtime.rpc.akka.AkkaRpcActor.handleRunAsync(AkkaRpcActor.java:453)

at org.apache.flink.runtime.rpc.akka.AkkaRpcActor.handleRpcMessage(AkkaRpcActor.java:218)

at org.apache.flink.runtime.rpc.akka.FencedAkkaRpcActor.handleRpcMessage(FencedAkkaRpcActor.java:84)

at org.apache.flink.runtime.rpc.akka.AkkaRpcActor.handleMessage(AkkaRpcActor.java:[168](https://github.com/apache/kyuubi/actions/runs/6649714451/job/18068699087?pr=5501#step:8:169))

at akka.japi.pf.UnitCaseStatement.apply(CaseStatements.scala:24)

...

```

#### 2. what make the test case failed?

If we want know the reason about the exception, we need to understand the process of flink executing stop job, the process line like this code space show(it's source is our bad test case, we can use this test case to solve similar problems)

```

1. sql

1.1 create table tbl_a (a int) with ('connector' = 'datagen','rows-per-second'='10')

1.2 create table tbl_b (a int) with ('connector' = 'blackhole')

1.3 insert into tbl_b select * from tbl_a

2. start job: it will get 2 tasks abount source sink

3. show job: we can get job info

4. stop job(the main error):

4.1 stop job need checkpoint

4.2 start checkpoint, it need all task state is running

4.3 checkpoint can not get all task state is running, then throw the exception

```

Actually, in a normal process, it should not throw the exception, if this happens to your job, please check your kyuubi conf `kyuubi.session.engine.flink.max.rows`, it's default value is 1000000. But in the test case, we only the the conf's value is 10.

It's the reason to make the error, this conf makes when we execute a stream query, it will cancel the when the limit is reached. Because flink's datagen is a streamconnector, so we can imagine, when we execute those sql, because our conf, it will make the sink task be canceled because the query reached 10. So when we execute stop job, flink checkpoint cannot get the tasks about this job is all in running state, then flink throw this exception.

#### 3. how can we solve this problem?

When your job makes the same exception, please make sure your kyuubi conf `kyuubi.session.engine.flink.max.rows`'s value can it meet your streaming query needs? Then changes the conf's value.

close#5531

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No

Closes#5549 from davidyuan1223/fix_flink_test_bug.

Closes#5531

ce7fd7961 [david yuan] Update externals/kyuubi-flink-sql-engine/src/test/scala/org/apache/kyuubi/engine/flink/operation/FlinkOperationSuite.scala

dc3a4b9ba [davidyuan] fix flink on yarn test bug

86a647ad9 [davidyuan] fix flink on yarn test bug

cbd4c0c3d [davidyuan] fix flink on yarn test bug

8b51840bc [davidyuan] add common method to get session level config

bcb0cf372 [davidyuan] Merge remote-tracking branch 'origin/master'

72e7aea3c [david yuan] Merge branch 'apache:master' into master

57ec746e9 [david yuan] Merge pull request #13 from davidyuan1223/fix

56b91a321 [yuanfuyuan] fix_4186

c8eb9a2c7 [david yuan] Merge branch 'apache:master' into master

2beccb6ca [david yuan] Merge branch 'apache:master' into master

0925a4b6f [david yuan] Merge pull request #12 from davidyuan1223/revert-11-fix_4186

40e80d9a8 [david yuan] Revert "fix_4186"

c83836b43 [david yuan] Merge pull request #11 from davidyuan1223/fix_4186

360d183b0 [david yuan] Merge branch 'master' into fix_4186

b61604442 [yuanfuyuan] fix_4186

e244029b8 [david yuan] Merge branch 'apache:master' into master

bfa6cbf97 [davidyuan1223] Merge branch 'apache:master' into master

16237c2a9 [davidyuan1223] Merge branch 'apache:master' into master

c48ad38c7 [yuanfuyuan] remove the used blank lines

55a0a43c5 [xiaoyuandajian] Merge pull request #10 from xiaoyuandajian/fix-#4057

cb1193576 [yuan] Merge remote-tracking branch 'origin/fix-#4057' into fix-#4057

86e4e1ce0 [yuan] fix-#4057 info: modify the shellcheck errors file in ./bin 1. "$@" is a array, we want use string to compare. so update "$@" => "$*" 2. `tty` mean execute the command, we can use $(tty) replace it 3. param $# is a number, compare number should use -gt/-lt,not >/< 4. not sure the /bin/kyuubi line 63 'exit -1' need modify? so the directory bin only have a shellcheck note in /bin/kyuubi

dd39efdeb [袁福元] fix-#4057 info: 1. "$@" is a array, we want use string to compare. so update "$@" => "$*" 2. `tty` mean execute the command, we can use $(tty) replace it 3. param $# is a number, compare number should use -gt/-lt,not >/<

Lead-authored-by: davidyuan <yuanfuyuan@mafengwo.com>

Co-authored-by: david yuan <51512358+davidyuan1223@users.noreply.github.com>

Co-authored-by: yuanfuyuan <1406957364@qq.com>

Co-authored-by: yuan <yuanfuyuan@mafengwo.com>

Co-authored-by: davidyuan1223 <51512358+davidyuan1223@users.noreply.github.com>

Co-authored-by: xiaoyuandajian <51512358+xiaoyuandajian@users.noreply.github.com>

Co-authored-by: 袁福元 <yuanfuyuan@mafengwo.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

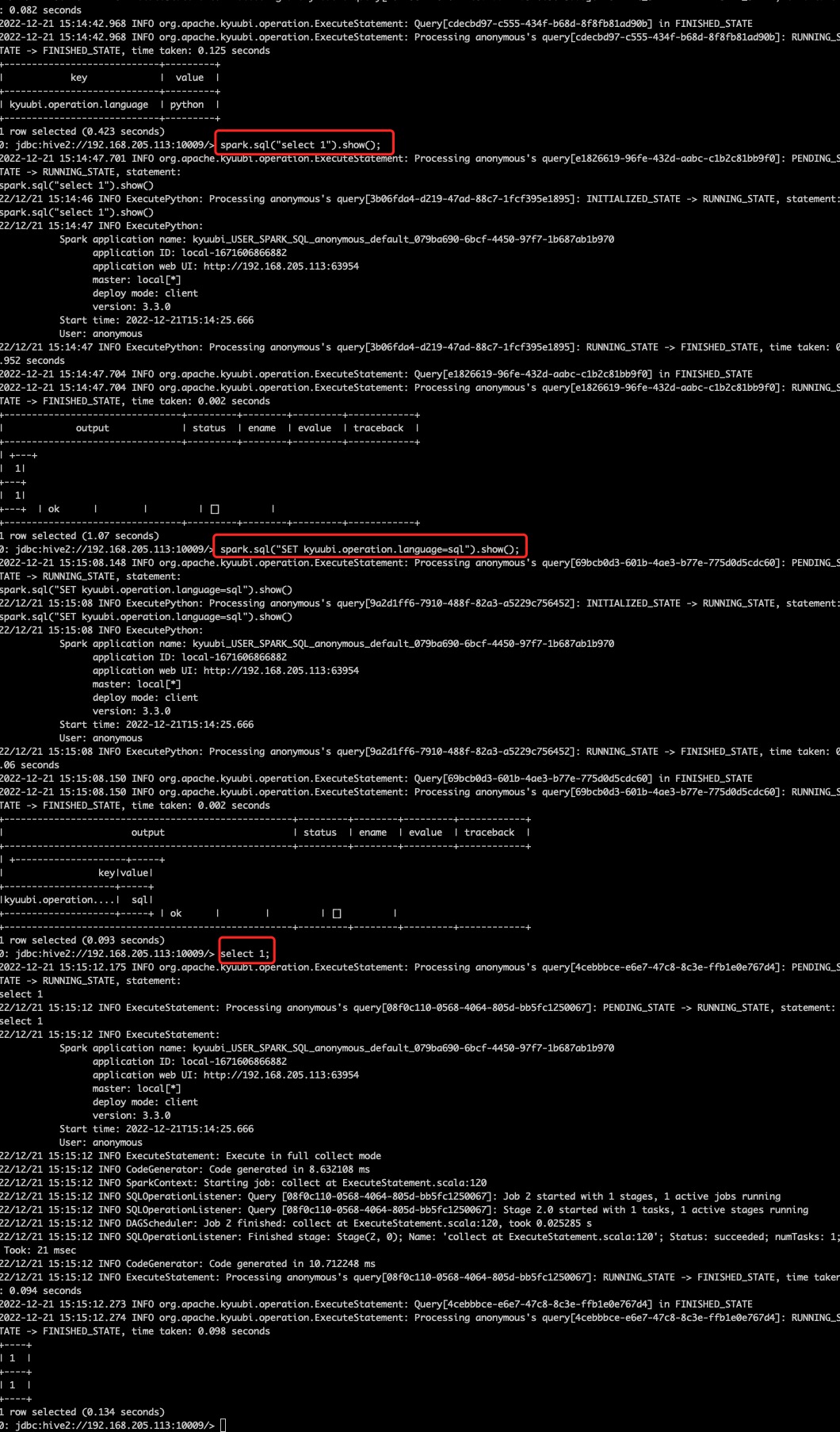

### _Why are the changes needed?_

current version, when set `kyuubi.session.engine.spark.showProgress=true`, it will show stage's progress info,but the info only show stage's detail, now we need to add job info in this, just like

```

[Stage 1:> (0 + 1) / 2]

```

to

```

[Job 1 (0 / 1) Stages] [Stage 1:> (0 + 1) / 2]

```

**this update is useful when user want know their sql execute detail**

closes#4186

### _How was this patch tested?_

- [x] Add screenshots for manual tests if appropriate

**The photo show match log**

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No

Closes#5410 from davidyuan1223/improvement_add_job_log.

Closes#4186

d8d03c4c0 [Cheng Pan] Update externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/spark/kyuubi/SQLOperationListener.scala

a06e9a17c [david yuan] Update SparkConsoleProgressBar.scala

854408416 [david yuan] Merge branch 'apache:master' into improvement_add_job_log

963ff18b9 [david yuan] Update SparkConsoleProgressBar.scala

9e4635653 [david yuan] Update SparkConsoleProgressBar.scala

8c04dca7d [david yuan] Update SQLOperationListener.scala

39751bffa [davidyuan] fix

4f657e728 [davidyuan] fix deleted files

86756eba7 [david yuan] Merge branch 'apache:master' into improvement_add_job_log

0c9ac27b5 [davidyuan] add showProgress with jobInfo Unit Test

d4434a0de [davidyuan] Revert "add showProgress with jobInfo Unit Test"

84b1aa005 [davidyuan] Revert "improvement_add_job_log fix"

66126f96e [davidyuan] Merge remote-tracking branch 'origin/improvement_add_job_log' into improvement_add_job_log

228fd9cf3 [davidyuan] add showProgress with jobInfo Unit Test

055e0ac96 [davidyuan] add showProgress with jobInfo Unit Test

e4aac34bd [davidyuan] Merge remote-tracking branch 'origin/improvement_add_job_log' into improvement_add_job_log

b226adad8 [davidyuan] Merge remote-tracking branch 'origin/improvement_add_job_log' into improvement_add_job_log

a08799ca0 [david yuan] Update externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/spark/kyuubi/StageStatus.scala

a991b68c4 [david yuan] Update externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/spark/kyuubi/StageStatus.scala

d12046dac [davidyuan] add showProgress with jobInfo Unit Test

10a56b159 [davidyuan] add showProgress with jobInfo Unit Test

a973cdde6 [davidyuan] improvement_add_job_log fix 1. provide Option[Int] with JobId

e8a510891 [davidyuan] improvement_add_job_log fix 1. provide Option[Int] with JobId

7b9e874f2 [davidyuan] improvement_add_job_log fix 1. provide Option[Int] with JobId

5b4aaa8b5 [davidyuan] improvement_add_job_log fix 1. fix new end line 2. provide Option[Int] with JobId

780f9d15e [davidyuan] improvement_add_job_log fix 1. remove duplicate synchronized 2. because the activeJobs is ConcurrentHashMap, so reduce synchronized 3. fix scala code style 4. change forEach to asScala code style 5. change conf str to KyuubiConf.XXX.key

59340b713 [davidyuan] add showProgress with jobInfo Unit Test

af05089d4 [davidyuan] add showProgress with jobInfo Unit Test

c07535a01 [davidyuan] [Improvement] spark showProgress can briefly output info of the job #4186

d4bdec798 [yuanfuyuan] fix_4186

9fa8e73fc [davidyuan] add showProgress with jobInfo Unit Test

49debfbe3 [davidyuan] improvement_add_job_log fix 1. provide Option[Int] with JobId

5cf8714e0 [davidyuan] improvement_add_job_log fix 1. provide Option[Int] with JobId

249a422b6 [davidyuan] improvement_add_job_log fix 1. provide Option[Int] with JobId

e15fc7195 [davidyuan] improvement_add_job_log fix 1. fix new end line 2. provide Option[Int] with JobId

4564ef98f [davidyuan] improvement_add_job_log fix 1. remove duplicate synchronized 2. because the activeJobs is ConcurrentHashMap, so reduce synchronized 3. fix scala code style 4. change forEach to asScala code style 5. change conf str to KyuubiConf.XXX.key

32ad0759b [davidyuan] add showProgress with jobInfo Unit Test

d30492e46 [davidyuan] add showProgress with jobInfo Unit Test

6209c344e [davidyuan] [Improvement] spark showProgress can briefly output info of the job #4186

56b91a321 [yuanfuyuan] fix_4186

Lead-authored-by: davidyuan <yuanfuyuan@mafengwo.com>

Co-authored-by: davidyuan <davidyuan1223@gmail.com>

Co-authored-by: david yuan <51512358+davidyuan1223@users.noreply.github.com>

Co-authored-by: yuanfuyuan <1406957364@qq.com>

Co-authored-by: Cheng Pan <pan3793@gmail.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

Resolve: #5405 Support the Flink 1.18

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No

Closes#5465 from YesOrNo828/flink-1.18.

Closes#5405

a0010ca14 [Xianxun Ye] [KYUUBI #5405] [FLINK] Remove flink1.18 rc repo

2a4ae365c [Xianxun Ye] Update .github/workflows/master.yml

d4d458dc7 [Xianxun Ye] [KYUUBI #5405] [FLINK] Update the flink1.18-rc3 repo

99172e3da [Xianxun Ye] [KYUUBI #5405] [FLINK] Using the staging repo during the RC stage

4c0cf887b [Xianxun Ye] [KYUUBI #5405] [FLINK] Using the staging repo during the RC stage

c74f5c31b [Xianxun Ye] [KYUUBI #5405] [FLINK] fixed Pan's comments.

1933ebadd [Xianxun Ye] [KYUUBI #5405] [FLINK] Support Flink 1.18

Authored-by: Xianxun Ye <yesorno828423@gmail.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

Refer to spark and flink settings conf, support configure Trino session conf in kyuubi-default.conf

issue : https://github.com/apache/kyuubi/issues/5282

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No

Closes#5283 from ASiegeLion/kyuubi-master-trino.

Closes#5282

87a3f57b4 [Cheng Pan] Apply suggestions from code review

effdd79f4 [liupeiyue] [KYUUBI #5282]Add trino's session conf to kyuubi-default.xml

399a200f7 [liupeiyue] [KYUUBI #5282]Add trino's session conf to kyuubi-default.xml

7462b32c2 [liupeiyue] [KYUUBI #5282]Add trino's session conf to kyuubi-default.xml--Update documentation

5295f5f94 [liupeiyue] [KYUUBI #5282]Add trino's session conf to kyuubi-default.xml

Lead-authored-by: liupeiyue <liupeiyue@yy.com>

Co-authored-by: Cheng Pan <pan3793@gmail.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

To close#5382.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No

Closes#5490 from zhuyaogai/issue-5382.

Closes#5382

4757445e7 [Fantasy-Jay] Remove unrelated comment.

f68c7aa6c [Fantasy-Jay] Refactor JDBC engine to reduce to code duplication.

4ad6b3c53 [Fantasy-Jay] Refactor JDBC engine to reduce to code duplication.

Authored-by: Fantasy-Jay <13631435453@163.com>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

close [#5449](https://github.com/apache/kyuubi/issues/5449).

Unlike the initial preview release, Delta Spark 3.0.0 is now built on top of Apache Spark™ 3.5.

Delta Spark maven artifact has been renamed from delta-core to delta-spark.

https://github.com/delta-io/delta/releases/tag/v3.0.0

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No

Closes#5450 from zml1206/5449.

Closes#5449

a7969ed6a [zml1206] bump Delta Lake 3.0.0

Authored-by: zml1206 <zhuml1206@gmail.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

The Apache Spark Community found a performance regression with log4j2. See https://github.com/apache/spark/pull/36747.

This PR to fix the performance issue on our side.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No.

Closes#5400 from ITzhangqiang/KYUUBI_5365.

Closes#5365

dbb9d8b32 [ITzhangqiang] [KYUUBI #5365] Don't use Log4j2's extended throwable conversion pattern in default logging configurations

Authored-by: ITzhangqiang <itzhangqiang@163.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

Replace string literal with constant variable

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No

Closes#5339 from cxzl25/use_engine_init_timeout_key.

Closes#5339

bef2eaa4a [sychen] fix

Authored-by: sychen <sychen@ctrip.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

- Extract common assertion method for verifying file contents

- Ensure integrity of the file by comparing the line count

- Correct the script name for Spark engine KDF doc generation from `gen_kdf.sh` to `gen_spark_kdf_docs.sh`

- Add `gen_hive_kdf_docs.sh` script for Hive engine KDF doc generation

- Fix incorrect hints for Ranger spec file generation

- shows the line number of the incorrect file content

- Streamingly read file content by line with buffered support

- Regeneration hints:

<img width="656" alt="image" src="https://github.com/apache/kyuubi/assets/1935105/d1a7cb70-8b63-4fe9-ae27-80dadbe84799">

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No.

Closes#5275 from bowenliang123/doc-regen-hint.

Closes#5275

9af97ab86 [Bowen Liang] implicit source position

07020c74d [liangbowen] assertFileContent

Lead-authored-by: liangbowen <liangbowen@gf.com.cn>

Co-authored-by: Bowen Liang <liangbowen@gf.com.cn>

Signed-off-by: Bowen Liang <liangbowen@gf.com.cn>

### _Why are the changes needed?_

Flink sessions are now managed by Kyuubi, hence disable session timeout from Flink itself.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No.

Closes#5264 from link3280/disable_flink_session_timeout.

Closes#5264

fff5c54d7 [Paul Lin] Force disable Flink's session timeout

Authored-by: Paul Lin <paullin3280@gmail.com>

Signed-off-by: Paul Lin <paullin3280@gmail.com>

### _Why are the changes needed?_

Recover CI.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No

Closes#5258 from pan3793/testcontainers.

Closes#5253

c1c8241af [Cheng Pan] fix

ce4f9ed2c [Cheng Pan] [KYUUBI #5253][FOLLOWUP] Supply testcontainers-scala-scalatest deps for ha module

Authored-by: Cheng Pan <chengpan@apache.org>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

Implement this issue: #5232

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No

Closes#5240 from XorSum/always_cancel_job_group.

Closes#5232

7da16aaa7 [bkhan] In SparkOperation#cleanup always calls cancelJobGroup even though it's in the completed state

Authored-by: bkhan <bkhan@trip.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

Closes#3444

### _Why are the changes needed?_

### _How was this patch tested?_

- [x] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.apache.org/docs/latest/develop_tools/testing.html#running-tests) locally before make a pull request

Closes#3558 from iodone/kyuubi-3444.

Closes#3444

acaa72afe [odone] remove plugin dependency from kyuubi spark engine

739f7dd5b [odone] remove plugin dependency from kyuubi spark engine

1146eb6e0 [odone] kyuubi-3444

Authored-by: odone <odone.zhang@gmail.com>

Signed-off-by: ulyssesyou <ulyssesyou@apache.org>

### _Why are the changes needed?_

The generated application name is not effective in Flink app mode. The PR moves the name generating to the `ProcessBuilder`.

The generated app name would be like `kyuubi_USER_FLINK_SQL_myuser_default_382c0371-8cc1-4aec-90bd-a2acf4de6fac`.

### _How was this patch tested?_

- [x] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No.

Closes#5200 from link3280/flink_app_name.

Closes#5200

bf06d1c16 [Paul Lin] Fix engine name udf test

6aa09e462 [Paul Lin] Filter out unused conf in app mode

957d18c42 [Paul Lin] Fix test error in local mode

eaa5de9b4 [Paul Lin] Fix engine name missing in tests

109ff46f5 [Paul Lin] Fix test error

efb1cda82 [Paul Lin] Fix compatibility with YARN and local

65e6759b2 [Paul Lin] Remove unused import

49860f65e [Paul Lin] Optimize Flink application name generating

Authored-by: Paul Lin <paullin3280@gmail.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

- Remove the provided dependency `flink-table-planner_${scala.binary.version}` which provides a legacy table planner API on Scala, but is never used in Kyuubi's source code or in runtime directly.

- `** The legacy planner is deprecated and will be dropped in Flink 1.14.**` according to [Flink 1.13's doc of Legacy Planner](https://nightlies.apache.org/flink/flink-docs-release-1.13/docs/dev/table/legacy_planner/)

- Kyuubi has dropped support for Flink 1.14 and before in #4588

- Remove the unused provided dependency `flink-sql-parser`

- All tests on Scala 2.12 work fine without them, as `flink-table-runtime` dependency provides enough Java API for usage.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No.

Closes#5222 from bowenliang123/flink-remove-planner.

Closes#5222

716ec06e9 [liangbowen] remove flink-sql-parser dependency

0922ba5af [liangbowen] Remove unnecessary dependency flink-table-planner

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: Bowen Liang <liangbowen@gf.com.cn>

### _Why are the changes needed?_

- adding source code in `src/main/scala-2.12/**/*.scala` and `src/main/scala-2.13/**/*.scala` to including paths for Scala spotless styling

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No.

Closes#5209 from bowenliang123/scala-reformat.

Closes#5209

84b4205e9 [liangbowen] use wildcard for scala versions

0c32c582d [liangbowen] reformat scala-2.12 specific code

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

- Use `Set` collection for order-insensitive configs instead of `Seq`

- kyuubi.frontend.thrift.binary.ssl.disallowed.protocols

- kyuubi.authentication

- kyuubi.authentication.ldap.groupFilter

- kyuubi.authentication.ldap.userFilter

- kyuubi.kubernetes.context.allow.list

- kyuubi.kubernetes.namespace.allow.list

- kyuubi.session.conf.ignore.list

- kyuubi.session.conf.restrict.list

- kyuubi.session.local.dir.allow.list

- kyuubi.batch.conf.ignore.list

- kyuubi.engine.deregister.exception.classes

- kyuubi.engine.deregister.exception.messages

- kyuubi.operation.plan.only.excludes

- kyuubi.server.limit.connections.user.unlimited.list

- kyuubi.server.administrators

- kyuubi.metrics.reporters

- Support skipping blank elements

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No.

Closes#5185 from bowenliang123/conf-toset.

Closes#5185

c656af78a [liangbowen] conf to set

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

Currently, the name of flink bootstrap SQL is auto-generated 'collect'.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No.

Closes#5190 from link3280/bootstrap_job_name.

Closes#5190

ac769295c [Paul Lin] Explicit name Flink bootstrap sql

Authored-by: Paul Lin <paullin3280@gmail.com>

Signed-off-by: Paul Lin <paullin3280@gmail.com>

### _Why are the changes needed?_

As titled.

### _How was this patch tested?_

- [x] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5134 from link3280/KYUUBI-4806.

Closes#4806

a1b74783c [Paul Lin] Optimize code style

546cfdf5b [Paul Lin] Update externals/kyuubi-flink-sql-engine/src/main/scala/org/apache/kyuubi/engine/flink/operation/FlinkOperation.scala

b6eb7af4f [Paul Lin] Update externals/kyuubi-flink-sql-engine/src/main/scala/org/apache/kyuubi/engine/flink/result/ResultSet.scala

1563fa98b [Paul Lin] Remove explicit StartRowOffset for Flink

4e61a348c [Paul Lin] Add comments

c93294650 [Paul Lin] Improve code style

6bd0c8e69 [Paul Lin] Use dedicated thread pool

15412db3a [Paul Lin] Improve logging

d6a2a9cff [Paul Lin] [KYUUBI #4806][FLINK] Implement incremental result fetching

Authored-by: Paul Lin <paullin3280@gmail.com>

Signed-off-by: Paul Lin <paullin3280@gmail.com>

### _Why are the changes needed?_

- adding a basic compilation CI test on Scala 2.13 , skipping test runs

- separate versions of `KyuubiSparkILoop` for Scala 2.12 and 2.13, adapting the changes of Scala interpreter packages

- rename `export` variable

```

[Error] /Users/bw/dev/kyuubi/kyuubi-server/src/test/scala/org/apache/kyuubi/server/api/v1/AdminResourceSuite.scala:563: Wrap `export` in backticks to use it as an identifier, it will become a keyword in Scala 3.

```

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No.

Closes#5188 from bowenliang123/sparksql-213.

Closes#5188

04f192064 [liangbowen] update

9e764271b [liangbowen] add ci for compilation with server module

a465375bd [liangbowen] update

5c3f24fdf [liangbowen] update

4b6a6e339 [liangbowen] use Iterable for the row in MySQLTextResultSetRowPacket

f09d61d26 [liangbowen] use ListMap.newBuilder

6b5480872 [liangbowen] Use Iterable for collections

1abfa29d8 [liangbowen] 2.12's KyuubiSparkILoop

b1c9da591 [liangbowen] rename export variable

b6a6e077b [liangbowen] remove original KyuubiSparkILoop

15438b503 [liangbowen] move back scala 2.12's KyuubiSparkILoop

dd3244351 [liangbowen] adapt spark sql module to 2.13

62d3abbf0 [liangbowen] adapt server module to 2.13

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

- Fix class mismatch when trying to compilation on Scala 2.13, due to implicit class reference to `StageInfo`. The compilation fails by type mismatching, if the compiler classloader loads Spark's `org.apache.spark.schedulerStageInfo` ahead of Kyuubi's `org.apache.spark.kyuubi.StageInfo`.

- Change var integer to AtomicInteger for `numActiveTasks` and `numCompleteTasks`, preventing possible concurrent inconsistency

```

[ERROR] [Error] /Users/bw/dev/kyuubi/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/spark/kyuubi/SQLOperationListener.scala:56: type mismatch;

found : java.util.concurrent.ConcurrentHashMap[org.apache.spark.kyuubi.StageAttempt,org.apache.spark.scheduler.StageInfo]

required: java.util.concurrent.ConcurrentHashMap[org.apache.spark.kyuubi.StageAttempt,org.apache.spark.kyuubi.StageInfo]

[INFO] [Info] : java.util.concurrent.ConcurrentHashMap[org.apache.spark.kyuubi.StageAttempt,org.apache.spark.scheduler.StageInfo] <: java.util.concurrent.ConcurrentHashMap[org.apache.spark.kyuubi.StageAttempt,org.apache.spark.kyuubi.StageInfo]?

[INFO] [Info] : false

[ERROR] [Error] /Users/bw/dev/kyuubi/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/spark/kyuubi/SQLOperationListener.scala:126: not enough arguments for constructor StageInfo: (stageId: Int, attemptId: Int, name: String, numTasks: Int, rddInfos: Seq[org.apache.spark.storage.RDDInfo], parentIds: Seq[Int], details: String, taskMetrics: org.apache.spark.executor.TaskMetrics, taskLocalityPreferences: Seq[Seq[org.apache.spark.scheduler.TaskLocation]], shuffleDepId: Option[Int], resourceProfileId: Int, isPushBasedShuffleEnabled: Boolean, shuffleMergerCount: Int): org.apache.spark.scheduler.StageInfo.

Unspecified value parameters name, numTasks, rddInfos...

[ERROR] [Error] /Users/bw/dev/kyuubi/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/spark/kyuubi/SQLOperationListener.scala:148: value numActiveTasks is not a member of org.apache.spark.scheduler.StageInfo

[ERROR] [Error] /Users/bw/dev/kyuubi/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/spark/kyuubi/SQLOperationListener.scala:156: value numActiveTasks is not a member of org.apache.spark.scheduler.StageInfo

[ERROR] [Error] /Users/bw/dev/kyuubi/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/spark/kyuubi/SQLOperationListener.scala:158: value numCompleteTasks is not a member of org.apache.spark.scheduler.StageInfo

```

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No.

Closes#5184 from bowenliang123/spark-stage-info.

Closes#5184

fd0b9b564 [liangbowen] update

d410491f3 [liangbowen] rename Kyuubi's StageInfo to SparkStageInfo preventing class mismatch

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

- Replace deprecated class alias `scala.tools.nsc.interpreter.IR` and `scala.tools.nsc.interpreter.JPrintWriter` in Scala 2.13 with equivalent classes `scala.tools.nsc.interpreter.Results` and `java.io.PrintWriter`

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

Closes#5180 from bowenliang123/interpreter-213.

Closes#5180

e76f1f05a [liangbowen] prevent to use deprecated classes in the package scala.tools.nsc.interpreter of Scala 2.13

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

- Change hardcoded Scala's version 2.12 in Maven module's `artifactId` to placeholder `scala.binary.version` which is defined in project parent pom as 2.12

- Preparation for Scala 2.13/3.x support in the future

- No impact on using or building Maven modules

- Some ignorable warning messages for unstable artifactId will be thrown by Maven.

```

Warning: Some problems were encountered while building the effective model for org.apache.kyuubi:kyuubi-server_2.12🫙1.8.0-SNAPSHOT

Warning: 'artifactId' contains an expression but should be a constant

```

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

### _Was this patch authored or co-authored using generative AI tooling?_

No.

Closes#5175 from bowenliang123/artifactId-scala.

Closes#5177

2eba29cfa [liangbowen] use placeholder of scala binary version for artifactId

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

Currently, `getNextRowSetInternal` returns `TRowSet` which is not friendly to explicit EOS in streaming result fetch.

This PR changes the return type to `TFetchResultsResp` to allow the engines to determine the EOS.

### _How was this patch tested?_

- [x] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5160 from link3280/refactor_result.

Closes#5160

09822f2ee [Paul Lin] Fix hasMoreRows missing

c94907e2b [Paul Lin] Explicitly set `resp.setHasMoreRows(false)` for operations

4d193fb1d [Paul Lin] Revert unrelated changes in FlinkOperation

ffd0367b3 [Paul Lin] Refactor getNextRowSetInternal to support fetch streaming data

Authored-by: Paul Lin <paullin3280@gmail.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

- extract development scripts for regenerating and verifying the golden files

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5121 from bowenliang123/gen-golden.

Closes#5121

a8fb20046 [liangbowen] add golden result file path hint in comment

444e5aff9 [liangbowen] nit

eec4fe84f [liangbowen] use the generation scripts for running test suites.

5a97785e6 [liangbowen] fix class name in gen_ranger_spec_json.sh

3be9aacf5 [liangbowen] comments

32993de43 [liangbowen] comments

ca0090909 [liangbowen] update scripts

6439ad3e9 [liangbowen] update scripts

f26d77935 [liangbowen] add scripts for golden files' generation

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

Before this PR, the ip address obtained in the K8s environment is incorrect, and `spark.driver.host` cannot be manually specified.

This time pr will adjust the way the IP is obtained and support the external parameter `spark.driver.host`

### _How was this patch tested?_

It has been tested in the local environment and verified as expected

Add screenshots for manual tests if appropriate

Closes#5148 from fantasticKe/feat_k8s_driver_ip.

Closes#5148

330f5f93d [marcoluo] feat: 修改k8s模式下获取spark.driver.host的方式

Authored-by: marcoluo <marcoluo@verizontal.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

- allow using the session's user/password to connect database in the JDBC engine

- it is allowed to be applied on any share level of engines, since every kyuubi session maintains a dedicated JDBC connection in the current implementation

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5120 from bowenliang123/jdbc-user.

Closes#5120

7b8ebd137 [liangbowen] Use session's user and password to connect to database in JDBC engine

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

- use `ListBuffer` instead of `ArrayBuffer` for inner string buffer, to minimize allocations for resizing

- handy `+=` operator in chaining style without explicit quotes, to make the user focus on content assembly and less distraction of quoting

- make `MarkdownBuilder` extending `Growable`, to utilize semantic operators like `+=` and `++=` which is unified inside for single or batch operation

- use `this +=` rather than `line(...)` , to reflect side effects in semantic way

- change list to stream for output comparison

- remove unused methods

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5127 from bowenliang123/md-buff.

Closes#5127

458e18c3d [liangbowen] Improvements for markdown builder

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

close#5105

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5128 from lsm1/features/kyuubi_5105.

Closes#5105

84be7fd6d [senmiaoliu] distinct default namespace

Authored-by: senmiaoliu <senmiaoliu@trip.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

Support SSL for trino engine.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [X] [Run test](https://kyuubi.apache.org/docs/latest/develop_tools/testing.html#running-tests) locally before make a pull request

Closes#3374 from hddong/support-trino-password.

Closes#3374

f39daaf78 [Cheng Pan] improve

6308c4cf7 [hongdongdong] Support SSL for trino engine

Lead-authored-by: Cheng Pan <chengpan@apache.org>

Co-authored-by: hongdongdong <hongdd@apache.org>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

close#5122

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5125 from lsm1/features/kyuubi_5122.

Closes#5122

02d0769cc [senmiaoliu] add hive kdf docs

Authored-by: senmiaoliu <senmiaoliu@trip.com>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

close#4940

### _How was this patch tested?_

- [x] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5110 from lsm1/features/kyuubi_4940.

Closes#4940

6c0a9a37f [senmiaoliu] add kdf for hive engine

Authored-by: senmiaoliu <senmiaoliu@trip.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

As titled.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5109 from link3280/bootstrap_file_not_found.

Closes#5108

318199fa2 [Paul Lin] [KYUUBI #5108][Flink] Fix iFileNotFoundException during Flink engine bootstrap

Authored-by: Paul Lin <paullin3280@gmail.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

As titled.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5107 from link3280/engine_fatal_log.

Closes#5106

db45392d1 [Paul Lin] [KYUUBI #5106][Flink] Improve logs for fatal errors

Authored-by: Paul Lin <paullin3280@gmail.com>

Signed-off-by: Paul Lin <paullin3280@gmail.com>

### _Why are the changes needed?_

close#5076

### _How was this patch tested?_

- [x] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5102 from lsm1/features/kyuubi_5076.

Closes#5076

ce7cfe678 [senmiaoliu] kdf support engine url

Authored-by: senmiaoliu <senmiaoliu@trip.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

- Support initializing or comparing version with major version only, e.g "3" equivalent to "3.0"

- Remove redundant version comparison methods by using semantic versions of Spark, Flink and Kyuubi

- adding common `toDouble` method

### _How was this patch tested?_

- [x] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5039 from bowenliang123/improve-semanticversion.

Closes#5039

b6868264f [liangbowen] nit

d39646b7d [liangbowen] SPARK_ENGINE_RUNTIME_VERSION

9148caad0 [liangbowen] use semantic versions

ecc3b4af6 [mans2singh] [KYUUBI #5086] [KYUUBI # 5085] Update config section of deploy on kubernetes

Lead-authored-by: liangbowen <liangbowen@gf.com.cn>

Co-authored-by: mans2singh <mans2singh@yahoo.com>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

close#5090

### _How was this patch tested?_

After this PR it generates normal settings file in windows.

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5091 from wForget/KYUUBI-5090.

Closes#5090

9e974c7f8 [wforget] fix

dc1ebfc08 [wforget] fix

2cbec60f9 [wforget] [KYUUBI-5090] Fix AllKyuubiConfiguration to generate redundant blank lines in Windows

ecc3b4af6 [mans2singh] [KYUUBI #5086] [KYUUBI # 5085] Update config section of deploy on kubernetes

Lead-authored-by: wforget <643348094@qq.com>

Co-authored-by: mans2singh <mans2singh@yahoo.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

As titled.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5082 from link3280/KYUUBI-5080.

Closes#5080

e8026b89b [Paul Lin] [KYUUBI #4806][FLINK] Improve logs

fd78f3239 [Paul Lin] [KYUUBI #4806][FLINK] Fix gateway NPE

a0a7c4422 [Cheng Pan] Update externals/kyuubi-flink-sql-engine/src/main/java/org/apache/flink/client/deployment/application/executors/EmbeddedExecutorFactory.java

50830d4d4 [Paul Lin] [KYUUBI #5080][FLINK] Fix EmbeddedExecutorFactory not thread-safe during bootstrap

Lead-authored-by: Paul Lin <paullin3280@gmail.com>

Co-authored-by: Cheng Pan <pan3793@gmail.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

to fix

```

SparkDeltaOperationSuite:

org.apache.kyuubi.engine.spark.operation.SparkDeltaOperationSuite *** ABORTED ***

java.lang.RuntimeException: Unable to load a Suite class org.apache.kyuubi.engine.spark.operation.SparkDeltaOperationSuite that was discovered in the runpath: Not Support spark version (4,0)

at org.scalatest.tools.DiscoverySuite$.getSuiteInstance(DiscoverySuite.scala:80)

at org.scalatest.tools.DiscoverySuite.$anonfun$nestedSuites$1(DiscoverySuite.scala:38)

at scala.collection.TraversableLike.$anonfun$map$1(TraversableLike.scala:286)

at scala.collection.Iterator.foreach(Iterator.scala:943)

at scala.collection.Iterator.foreach$(Iterator.scala:943)

at scala.collection.AbstractIterator.foreach(Iterator.scala:1431)

at scala.collection.IterableLike.foreach(IterableLike.scala:74)

at scala.collection.IterableLike.foreach$(IterableLike.scala:73)

at scala.collection.AbstractIterable.foreach(Iterable.scala:56)

at scala.collection.TraversableLike.map(TraversableLike.scala:286)

...

Cause: java.lang.IllegalArgumentException: Not Support spark version (4,0)

at org.apache.kyuubi.engine.spark.WithSparkSQLEngine.$init$(WithSparkSQLEngine.scala:42)

at org.apache.kyuubi.engine.spark.operation.SparkDeltaOperationSuite.<init>(SparkDeltaOperationSuite.scala:25)

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at java.lang.Class.newInstance(Class.java:442)

at org.scalatest.tools.DiscoverySuite$.getSuiteInstance(DiscoverySuite.scala:66)

at org.scalatest.tools.DiscoverySuite.$anonfun$nestedSuites$1(DiscoverySuite.scala:38)

at scala.collection.TraversableLike.$anonfun$map$1(TraversableLike.scala:286)

...

```

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5075 from cfmcgrady/spark-4.0.

Closes#5075

ad38c0d98 [Fu Chen] refine test to adapt Spark 4.0

Authored-by: Fu Chen <cfmcgrady@gmail.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

Please refer to #4997

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [x] Add screenshots for manual tests if appropriate

1. connect to KyuubiServer with beeline

2. Confirm the Application is ACCEPTed in ResourceManager, Restart KyuubiServer

3. Confirmed that Engine was terminated shortly

```

23/06/28 10:44:59 INFO storage.BlockManagerMaster: Removed 1 successfully in removeExecutor

23/06/28 10:45:00 INFO spark.SparkSQLEngine: Current open session is 0

23/06/28 10:45:00 ERROR spark.SparkSQLEngine: Spark engine has been terminated because no incoming connection for more than 60000 ms, deregistering from engine discovery space.

23/06/28 10:45:00 WARN zookeeper.ZookeeperDiscoveryClient: This Kyuubi instance lniuhpi1616.nhnjp.ism:46588 is now de-registered from ZooKeeper. The server will be shut down after the last client session completes.

23/06/28 10:45:00 INFO spark.SparkSQLEngine: Service: [SparkTBinaryFrontend] is stopping.

```

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5002 from risyomei/feature/failfast.

Closes#5002

402d6c01f [Xieming LI] Changed runInNewThread based on comment

58f11e157 [Xieming LI] Changed runInNewThread to non-blocking

c6bb02d6a [Xieming LI] Fixed Unit Test

168d996d0 [Xieming LI] Start countdown after engine is started

48ee819f2 [Xieming LI] Fixed a typo

a8d305942 [Xieming LI] Using runInNewThread ported from Spark

21f0671df [Xieming LI] Updated document

a7d5d1082 [Xieming LI] Changed the default value to turn off this feature

437be512d [Xieming LI] Trigger CI to test agagin

42a847e84 [Xieming LI] Added Configuration for timeout, changed to ThreadPoolExecutor

639bd5239 [Xieming LI] Fail the engine fast when no incoming connection in CONNECTION mode

Authored-by: Xieming LI <risyomei@gmail.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

I came cross an issue that is the the value of EXECUTOR_POD_NAME_PREFIX_MAX_LENGTH is 0 when the param is accessed in generateExecutorPodNamePrefixForK8s method.

I tried to move the EXECUTOR_POD_NAME_PREFIX_MAX_LENGTH ahead of generateExecutorPodNamePrefixForK8s method. Then, I found this issue was gone.

So is it necessary to declare the EXECUTOR_POD_NAME_PREFIX_MAX_LENGTH variable before the method definition?

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5045 from zhaohehuhu/Improvement.

Closes#5045

c74732f0c [hezhao2] recover the blank line

99aa14b0c [hezhao2] fix the code style

29929a2cb [hezhao2] declare EXECUTOR_POD_NAME_PREFIX_MAX_LENGTH param before generateExecutorPodNamePrefixForK8s method

Authored-by: hezhao2 <hezhao2@cisco.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

This PR aims to support `getQueryId` in Spark engine. It get `sparl.sql.execution.id` by adding a Listener.

### _How was this patch tested?_

- [x] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5037 from Yikf/spark-queryid.

Closes#5030

9f2b5a3cb [yikaifei] Support get query id in Spark engine

Authored-by: yikaifei <yikaifei@apache.org>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

close#5035

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [x] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5038 from lsm1/features/kyuubi_5035.

Closes#5035

9ee06f113 [senmiaoliu] fix style

c68fd65ab [senmiaoliu] fix style

5d3d86972 [senmiaoliu] show session end time

Authored-by: senmiaoliu <senmiaoliu@trip.com>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

As titled.

### _How was this patch tested?_

- [x] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5014 from link3280/KYUUBI-4938.

Closes#4938

c43d480f9 [Paul Lin] [KYUUBI #4938][FLINK] Update function description

0dd991f03 [Paul Lin] [KYUUBI #4938][FLINK] Fix compatibility problems with Flink 1.16

7e6a3b184 [Paul Lin] [KYUUBI #4938][FLINK] Fix inconsistent istant in engine names

6ecde4c60 [Paul Lin] [KYUUBI #4938][FLINK] Implement Kyuubi UDF in Flink engine

Authored-by: Paul Lin <paullin3280@gmail.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

Adding compilation details info in SparkUI's Engine tab

- shows details of kyuubi version, including revision, revision time and branch

- show compilation version of Spark, Scala, Hadoop and Hive

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [x] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5019 from bowenliang123/enginetab-info.

Closes#5019

3ea108bd6 [liangbowen] shows Compilation Info in SparkUI's Engine tab

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

- remove unnecessary blank classSparkSimpleStatsReportListener

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5008 from bowenliang123/5007-followup.

Closes#5007

34e19f664 [liangbowen] remove blank SparkSimpleStatsReportListener

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

- Scalafmt 3.7.5 release note: https://github.com/scalameta/scalafmt/releases/tag/v3.7.5

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5007 from bowenliang123/scalafmt-3.7.5.

Closes#5007

f3f7163a4 [liangbowen] Bump Scalafmt from 3.7.4 to 3.7.5

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

close#5005

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/contributing/code/testing.html#running-tests) locally before make a pull request

Closes#5006 from QianyongY/features/kyuubi-5005.

Closes#5005

3bc8b7482 [yongqian] [KYUUBI #5005] Remove default settings

Authored-by: yongqian <yongqian@trip.com>

Signed-off-by: fwang12 <fwang12@ebay.com>

### _Why are the changes needed?_

- Remove redundant quoteIfNeeded method

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/develop_tools/testing.html#running-tests) locally before make a pull request

Closes#4973 from bowenliang123/redundant-quoteifneeded.

Closes#4937

acec0fb09 [liangbowen] Remove redundant quoteIfNeeded method

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

- To improve Scala code with corrections, simplification, scala style, redundancy cleaning-up. No feature changes introduced.

Corrections:

- Class doesn't correspond to file name (SparkListenerExtensionTest)

- Correct package name in ResultSetUtil and PySparkTests

Improvements:

- 'var' could be a 'val'

- GetOrElse(null) to orNull

Cleanup & Simplification:

- Redundant cast inspection

- Redundant collection conversion

- Simplify boolean expression

- Redundant new on case class

- Redundant return

- Unnecessary parentheses

- Unnecessary partial function

- Simplifiable empty check

- Anonymous function convertible to a method value

Scala Style:

- Constructing range for seq indices

- Get and getOrElse to getOrElse

- Convert expression to Single Abstract Method (SAM)

- Scala unnecessary semicolon inspection

- Map and getOrElse(false) to exists

- Map and flatten to flatMap

- Null initializer can be replaced by _

- scaladoc link to method

Other Improvements:

- Replace map and getOrElse(true) with forall

- Unit return type in the argument of map

- Size to length on arrays and strings

- Type check can be pattern matching

- Java mutator method accessed as parameterless

- Procedure syntax in method definition

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [ ] [Run test](https://kyuubi.readthedocs.io/en/master/develop_tools/testing.html#running-tests) locally before make a pull request

Closes#4959 from bowenliang123/scala-Improve.

Closes#4959

2d36ff351 [liangbowen] code improvement for Scala

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

- comment https://github.com/apache/kyuubi/pull/4963#discussion_r1230490326

- simplify reflection calling with unified `invokeAs` / `getField` method for either declared, inherited, or static methods / fields

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/develop_tools/testing.html#running-tests) locally before make a pull request

Closes#4970 from bowenliang123/unify-invokeas.

Closes#4970

592833459 [liangbowen] Revert "dedicate invokeStaticAs method"

ad45ff3fd [liangbowen] dedicate invokeStaticAs method

f08528c0f [liangbowen] nit

42aeb9fcf [liangbowen] add ut case

b5b384120 [liangbowen] nit

072add599 [liangbowen] add ut

8d019ab35 [liangbowen] unified invokeAs and getField

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

to close#4937.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/develop_tools/testing.html#running-tests) locally before make a pull request

Closes#4963 from bowenliang123/remove-spark-shim.

Closes#4937

e6593b474 [liangbowen] remove unnecessary row

2481e3317 [liangbowen] remove SparkCatalogShim

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

When the default databases of glue do not exist, initializing the engine session failed.

```

Caused by: software.amazon.awssdk.services.glue.model.AccessDeniedException: User: arn:aws:iam::xxxxx:user/vault-token-wap-udp-int-readwrite-1686560253-eVgl3oauuaB7v0V6wX9 is not authorized to perform: glue:GetDatabase on resource: arn:aws:glue:us-east-1:xxxxx:database/default because no identity-based policy allows the glue:GetDatabase action (Service: Glue, Status Code: 400, Request ID: 3f816608-0cb2-467b-9181-05c5bfcd29b3, Extended Request ID: null)

```

The default initialize sql "SHOW DATABASES" when Kyuubi spark accesses the glue catalog. Try to change the initialize sql "use glue.wap" has the same error.

```

kyuubi.engine.initialize.sql=use glue.wap

kyuubi.engine.session.initialize.sql=use glue.wap

```

The root cause is that hive defines a use:database, which will initialize access to the database default.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/develop_tools/testing.html#running-tests) locally before make a pull request

Closes#4952 from dev-lpq/glue_database.

Closes#4952

8f0951d4d [pengqli] add Glue database

90e47712f [pengqli] enhance AWS Glue database

Authored-by: pengqli <pengqli@cisco.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

close https://github.com/apache/kyuubi/issues/4881

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/develop_tools/testing.html#running-tests) locally before make a pull request

Closes#4886 from rhh777/jdbcengine.

Closes#4881

e0439f8e6 [haorenhui] [KYUUBI #4881] update settings.md

d667d8fe8 [haorenhui] [KYUUBI #4881] update conf/docs

cba06b4b9 [haorenhui] [KYUUBI #4881] simplify code

80be4d27c [haorenhui] [KYUUBI #4881] fix style

4f0fa3ab2 [haorenhui] [KYUUBI #4881] JDBCEngine performs initialization sql

Authored-by: haorenhui <haorenhui@kingsoft.com>

Signed-off-by: Cheng Pan <chengpan@apache.org>

### _Why are the changes needed?_

For the operation getNextRowSet method, we shall add lock for it.

For example, for spark operation, the result iterator is not thread-safe, it might throw exception(if the jdbc client to kyuubi server connection socket timeout).

For incremental collect mode, the fetchResult might trigger a spark task to collect the incremental result(`self.next().toIterator`).

The jdbc client to kyuubi gateway timeout, but the fetchResult request has been sent to engine.

Then the jdbc client re-send the fetchResult request.

And the getNextResultSet in spark engine side concurrent execute.

And the result iterator is not thread-safe and might cause NPE.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/develop_tools/testing.html#running-tests) locally before make a pull request

Closes#4949 from turboFei/lock_next_rowset.

Closes#4949

8f18f3236 [fwang12] getNextRowSetInternal and withLockRequired

Authored-by: fwang12 <fwang12@ebay.com>

Signed-off-by: fwang12 <fwang12@ebay.com>

### _Why are the changes needed?_

- apply the usage of `ReflectUtils` and `Dyn*` to the modules of engines and plugins (eg. Spark engine, Authz plugin, lineage plugin, beeline)

- remove similar redundant methods for calling reflected methods or getting field values

- unified reflection helper methods with type casting support, as `getField[T]` for getting field values from `getFields`, `invokeAs[T]` for invoking methods in `getMethods`.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [ ] Add screenshots for manual tests if appropriate

- [x] [Run test](https://kyuubi.readthedocs.io/en/master/develop_tools/testing.html#running-tests) locally before make a pull request

Closes#4879 from bowenliang123/reflect-use.

Closes#4879

c685fb67d [liangbowen] bug fix for "Cannot bind static field options" when executing "bin/beeline"

fc1fdf1de [liangbowen] import

59c3dd032 [liangbowen] comment

c435c131d [liangbowen] reflect util usage

Authored-by: liangbowen <liangbowen@gf.com.cn>

Signed-off-by: liangbowen <liangbowen@gf.com.cn>

### _Why are the changes needed?_

to adapt Spark 3.5, the new conf `spark.sql.execution.arrow.useLargeVarType` was introduced in https://github.com/apache/spark/pull/39572

the signature of function `ArrowUtils#toArrowSchema` before

```scala

def toArrowSchema(

schema: StructType,

timeZoneId: String,

errorOnDuplicatedFieldNames: Boolean): Schema