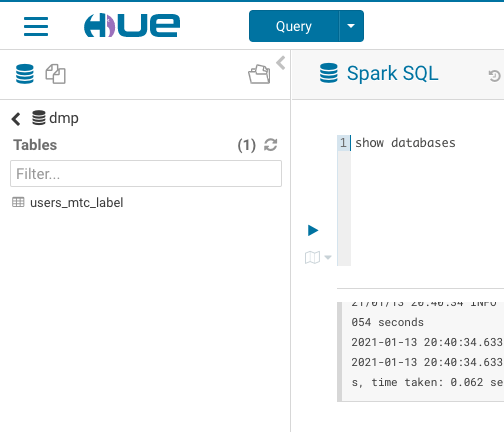

[](https://github.com/yaooqinn/kyuubi/pull/292)  [❨?❩](https://pullrequestbadge.com/?utm_medium=github&utm_source=yaooqinn&utm_campaign=badge_info)<!-- PR-BADGE: PLEASE DO NOT REMOVE THIS COMMENT --> <!-- Thanks for sending a pull request! Here are some tips for you: 1. If this is your first time, please read our contributor guidelines: https://kyuubi.readthedocs.io/en/latest/community/contributions.html --> <!-- replace ${issue ID} with the actual issue id --> Fixes #280 closes #292 <!-- Please clarify why the changes are needed. For instance, 1. If you add a feature, you can talk about the user case of it. 2. If you fix a bug, you can clarify why it is a bug. --> Before this PR, Kyuubi treat Operation GET_SCHEMAS * as an invalid Operation then cause HUE list databases failed, but HiveServer2 will return all databases on same request. - [x] Add some test cases that check the changes thoroughly including negative and positive cases if possible - [x] Add screenshots for manual tests if appropriate - [x] [Run test](https://kyuubi.readthedocs.io/en/latest/tools/testing.html#running-tests) locally before make a pull request After PR:  Squashed commit of the following: commit f56fdc3fe35b4aa5a7b6f09cbbaa023ec713e2fe Author: Cheng Pan <379377944@qq.com> Date: Sun Jan 17 23:27:03 2021 +0800 [KYUUBI-280] code style and ut commit ff74dbaf3e6d5606e5a2171e6dcc3628aa631eea Author: Cheng Pan <379377944@qq.com> Date: Sun Jan 17 23:08:59 2021 +0800 [KYUUBI-280] remove comments and handle schema * in other place commit a8bcf98cd70e36903ece654e1c37d08e868b1c5b Author: Cheng Pan <379377944@qq.com> Date: Sun Jan 17 13:47:24 2021 +0800 [KYUUBI-280] Align Operation GET_SCHEMAS * behavior with Hive

This commit is contained in:

parent

3a147f834b

commit

6f8564ce59

@ -21,6 +21,7 @@ import java.util.regex.Pattern

|

||||

|

||||

import scala.collection.mutable.ArrayBuffer

|

||||

|

||||

import org.apache.commons.lang3.StringUtils

|

||||

import org.apache.spark.sql.{Row, SparkSession}

|

||||

import org.apache.spark.sql.types.{ArrayType, BinaryType, BooleanType, ByteType, CalendarIntervalType, DataType, DateType, DecimalType, DoubleType, FloatType, IntegerType, LongType, MapType, NullType, NumericType, ShortType, StringType, StructField, StructType, TimestampType}

|

||||

|

||||

@ -187,7 +188,9 @@ class GetColumns(

|

||||

|

||||

val gviews = new ArrayBuffer[Row]()

|

||||

val globalTmpDb = catalog.globalTempViewManager.database

|

||||

if (Pattern.compile(schemaPattern).matcher(globalTmpDb).matches()) {

|

||||

if (StringUtils.isEmpty(schemaName) || schemaName == "*"

|

||||

|| Pattern.compile(convertSchemaPattern(schemaName, false))

|

||||

.matcher(globalTmpDb).matches()) {

|

||||

catalog.globalTempViewManager.listViewNames(tablePattern).foreach { v =>

|

||||

catalog.globalTempViewManager.get(v).foreach { plan =>

|

||||

plan.schema.zipWithIndex

|

||||

|

||||

@ -19,6 +19,7 @@ package org.apache.kyuubi.engine.spark.operation

|

||||

|

||||

import java.util.regex.Pattern

|

||||

|

||||

import org.apache.commons.lang3.StringUtils

|

||||

import org.apache.spark.sql.{Row, SparkSession}

|

||||

import org.apache.spark.sql.types.StructType

|

||||

|

||||

@ -44,8 +45,9 @@ class GetSchemas(spark: SparkSession, session: Session, catalogName: String, sch

|

||||

val schemaPattern = convertSchemaPattern(schema)

|

||||

val databases = spark.sessionState.catalog.listDatabases(schemaPattern)

|

||||

val globalTmpViewDb = spark.sessionState.catalog.globalTempViewManager.database

|

||||

if (Pattern.compile(convertSchemaPattern(schema, false))

|

||||

.matcher(globalTmpViewDb).matches()) {

|

||||

if (StringUtils.isEmpty(schema) || schema == "*"

|

||||

|| Pattern.compile(convertSchemaPattern(schema, false))

|

||||

.matcher(globalTmpViewDb).matches()) {

|

||||

iter = (databases :+ globalTmpViewDb).map(Row(_, "")).toList.iterator

|

||||

} else {

|

||||

iter = databases.map(Row(_, "")).toList.iterator

|

||||

|

||||

@ -19,6 +19,7 @@ package org.apache.kyuubi.engine.spark.operation

|

||||

|

||||

import java.util.regex.Pattern

|

||||

|

||||

import org.apache.commons.lang3.StringUtils

|

||||

import org.apache.spark.sql.{Row, SparkSession}

|

||||

import org.apache.spark.sql.catalyst.catalog.CatalogTableType

|

||||

import org.apache.spark.sql.types.StructType

|

||||

@ -51,7 +52,7 @@ class GetTables(

|

||||

.add(TABLE_NAME, "string", nullable = true, "Table name.")

|

||||

.add(TABLE_TYPE, "string", nullable = true, "The table type, e.g. \"TABLE\", \"VIEW\"")

|

||||

.add(REMARKS, "string", nullable = true, "Comments about the table.")

|

||||

.add("TYPE_CAT", "string", nullable = true, "The types catalog." )

|

||||

.add("TYPE_CAT", "string", nullable = true, "The types catalog.")

|

||||

.add("TYPE_SCHEM", "string", nullable = true, "the types schema (may be null)")

|

||||

.add("TYPE_NAME", "string", nullable = true, "Type name.")

|

||||

.add("SELF_REFERENCING_COL_NAME", "string", nullable = true,

|

||||

@ -82,8 +83,9 @@ class GetTables(

|

||||

|

||||

val views = if (matched(CatalogTableType.VIEW)) {

|

||||

val globalTempViewDb = catalog.globalTempViewManager.database

|

||||

(if (Pattern.compile(convertSchemaPattern(schema, datanucleusFormat = false))

|

||||

.matcher(globalTempViewDb).matches()) {

|

||||

(if (StringUtils.isEmpty(schema) || schema == "*"

|

||||

|| Pattern.compile(convertSchemaPattern(schema, datanucleusFormat = false))

|

||||

.matcher(globalTempViewDb).matches()) {

|

||||

catalog.listTables(globalTempViewDb, tablePattern, includeLocalTempViews = true)

|

||||

} else {

|

||||

catalog.listLocalTempViews(tablePattern)

|

||||

|

||||

@ -84,8 +84,8 @@ class SparkOperationSuite extends WithSparkSQLEngine {

|

||||

|

||||

val metaData = statement.getConnection.getMetaData

|

||||

|

||||

Seq("%", null, ".*", "c.*") foreach { pattern =>

|

||||

val rowSet = metaData.getColumns("", dftSchema, tableName, pattern)

|

||||

Seq("%", null, ".*", "c.*") foreach { columnPattern =>

|

||||

val rowSet = metaData.getColumns("", dftSchema, tableName, columnPattern)

|

||||

|

||||

import java.sql.Types._

|

||||

val expectedJavaTypes = Seq(BOOLEAN, TINYINT, SMALLINT, INTEGER, BIGINT, FLOAT, DOUBLE,

|

||||

@ -137,8 +137,8 @@ class SparkOperationSuite extends WithSparkSQLEngine {

|

||||

assert(pos === 18, "all columns should have been verified")

|

||||

}

|

||||

|

||||

val e = intercept[HiveSQLException](metaData.getColumns(null, "*", null, null))

|

||||

assert(e.getCause.getMessage contains "Dangling meta character '*' near index 0\n*\n^")

|

||||

val rowSet = metaData.getColumns(null, "*", "not_exist", "not_exist")

|

||||

assert(!rowSet.next())

|

||||

|

||||

val e1 = intercept[HiveSQLException](metaData.getColumns(null, null, null, "*"))

|

||||

assert(e1.getCause.getMessage contains "Dangling meta character '*' near index 0\n*\n^")

|

||||

|

||||

@ -106,7 +106,7 @@ trait JDBCTests extends KyuubiFunSuite {

|

||||

dbs.foreach(db => statement.execute(s"CREATE DATABASE IF NOT EXISTS $db"))

|

||||

val metaData = statement.getConnection.getMetaData

|

||||

|

||||

Seq("", "%", null, ".*", "_*", "_%", ".%") foreach { pattern =>

|

||||

Seq("", "*", "%", null, ".*", "_*", "_%", ".%") foreach { pattern =>

|

||||

checkResult(metaData.getSchemas(null, pattern), dbs ++ dbDflts)

|

||||

}

|

||||

|

||||

@ -120,9 +120,6 @@ trait JDBCTests extends KyuubiFunSuite {

|

||||

|

||||

checkResult(metaData.getSchemas(null, "db1"), Seq("db1"))

|

||||

checkResult(metaData.getSchemas(null, "db_not_exist"), Seq.empty)

|

||||

|

||||

val e = intercept[HiveSQLException](metaData.getSchemas(null, "*"))

|

||||

assert(e.getCause.getMessage contains "Dangling meta character '*' near index 0\n*\n^")

|

||||

}

|

||||

}

|

||||

|

||||

@ -166,25 +163,33 @@ trait JDBCTests extends KyuubiFunSuite {

|

||||

}

|

||||

assert(i === 4)

|

||||

|

||||

val rs3 = metaData.getTables(null, null, "table%", Array("VIEW"))

|

||||

assert(!rs3.next())

|

||||

val rs3 = metaData.getTables(null, "*", "*", Array("VIEW"))

|

||||

i = 2

|

||||

while(rs3.next()) {

|

||||

assert(rs3.getString(TABLE_NAME) == tables(i))

|

||||

i += 1

|

||||

}

|

||||

assert(i === 4)

|

||||

|

||||

val rs4 = metaData.getTables(null, null, "table%", Array("TABLE"))

|

||||

val rs4 = metaData.getTables(null, null, "table%", Array("VIEW"))

|

||||

assert(!rs4.next())

|

||||

|

||||

val rs5 = metaData.getTables(null, "*", "table%", Array("VIEW"))

|

||||

assert(!rs5.next())

|

||||

|

||||

val rs6 = metaData.getTables(null, null, "table%", Array("TABLE"))

|

||||

i = 0

|

||||

while(rs4.next()) {

|

||||

assert(rs4.getString(TABLE_NAME) == tables(i))

|

||||

while(rs6.next()) {

|

||||

assert(rs6.getString(TABLE_NAME) == tables(i))

|

||||

i += 1

|

||||

}

|

||||

assert(i === 2)

|

||||

|

||||

val rs5 = metaData.getTables(null, "default", "%", Array("VIEW"))

|

||||

val rs7 = metaData.getTables(null, "default", "%", Array("VIEW"))

|

||||

i = 2

|

||||

while(rs5.next()) {

|

||||

assert(rs5.getString(TABLE_NAME) == view_test)

|

||||

while(rs7.next()) {

|

||||

assert(rs7.getString(TABLE_NAME) == view_test)

|

||||

}

|

||||

|

||||

val e = intercept[HiveSQLException](metaData.getTables(null, "*", null, null))

|

||||

assert(e.getCause.getMessage contains "Dangling meta character '*' near index 0\n*\n^")

|

||||

}

|

||||

}

|

||||

|

||||

|

||||

Loading…

Reference in New Issue

Block a user