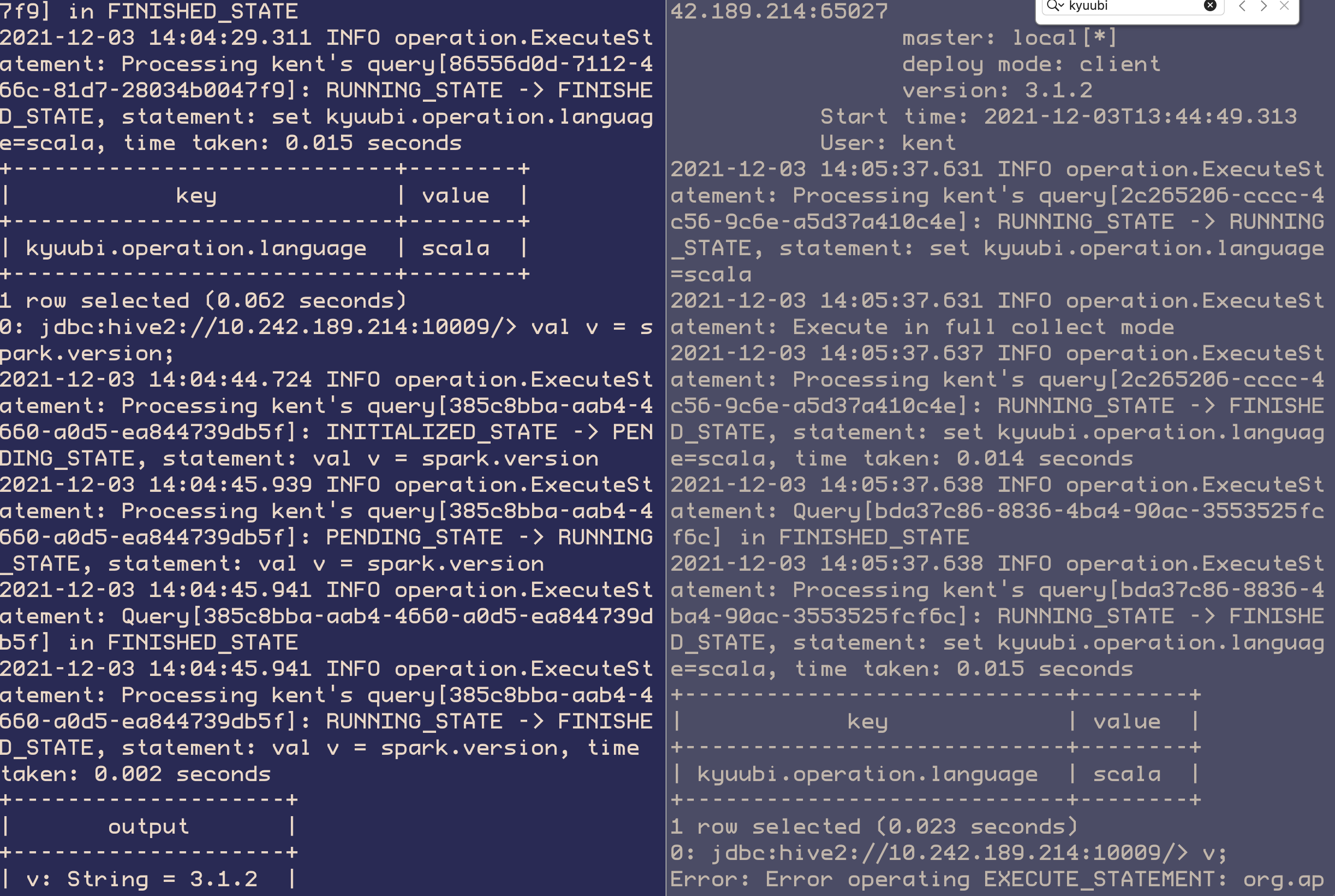

<!-- Thanks for sending a pull request!

Here are some tips for you:
  1. If this is your first time, please read our contributor guidelines: https://kyuubi.readthedocs.io/en/latest/community/contributions.html
  2. If the PR is related to an issue in https://github.com/apache/incubator-kyuubi/issues, add '[KYUUBI #XXXX]' in your PR title, e.g., '[KYUUBI #XXXX] Your PR title ...'.
  3. If the PR is unfinished, add '[WIP]' in your PR title, e.g., '[WIP][KYUUBI #XXXX] Your PR title ...'.
-->

### _Why are the changes needed?_
<!--
Please clarify why the changes are needed. For instance,
  1. If you add a feature, you can talk about the use case of it.
  2. If you fix a bug, you can clarify why it is a bug.
-->

Introduce the basic framework for running Scala; see #1490 for details.

### _How was this patch tested?_
- [x] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [x] Add screenshots for manual tests if appropriate

```
Beeline version 1.5.0-SNAPSHOT by Apache Kyuubi (Incubating)
0: jdbc:hive2://10.242.189.214:10009/> spark.version;
2021-12-03 13:47:07.556 INFO operation.ExecuteStatement: Processing kent's query[08b8b6da-d434-4296-b613-2027e3518441]: INITIALIZED_STATE -> PENDING_STATE, statement: spark.version
2021-12-03 13:47:07.560 INFO operation.ExecuteStatement: Processing kent's query[08b8b6da-d434-4296-b613-2027e3518441]: PENDING_STATE -> RUNNING_STATE, statement: spark.version
2021-12-03 13:47:07.558 INFO operation.ExecuteStatement: Processing kent's query[321dc15d-68d0-4f91-9216-1e08f09842df]: INITIALIZED_STATE -> PENDING_STATE, statement: spark.version
2021-12-03 13:47:07.559 INFO operation.ExecuteStatement: Processing kent's query[321dc15d-68d0-4f91-9216-1e08f09842df]: PENDING_STATE -> RUNNING_STATE, statement: spark.version
2021-12-03 13:47:07.560 INFO operation.ExecuteStatement:
    Spark application name: kyuubi_USER_SPARK_SQL_kent_default_61cff9fb-7035-4435-b509-80c1730876ed
    application ID: local-1638510289918
    application web UI: http://10.242.189.214:65027
    master: local[*]
    deploy mode: client
    version: 3.1.2
    Start time: 2021-12-03T13:44:49.313
    User: kent
2021-12-03 13:47:07.562 INFO scheduler.DAGScheduler: Asked to cancel job group 321dc15d-68d0-4f91-9216-1e08f09842df
2021-12-03 13:47:07.565 INFO operation.ExecuteStatement: Processing kent's query[321dc15d-68d0-4f91-9216-1e08f09842df]: RUNNING_STATE -> ERROR_STATE, statement: spark.version, time taken: 0.006 seconds
2021-12-03 13:47:07.565 ERROR operation.ExecuteStatement: Error operating EXECUTE_STATEMENT: org.apache.spark.sql.catalyst.parser.ParseException:
mismatched input 'spark' expecting {'(', 'ADD', 'ALTER', 'ANALYZE', 'CACHE', 'CLEAR', 'COMMENT', 'COMMIT', 'CREATE', 'DELETE', 'DESC', 'DESCRIBE', 'DFS', 'DROP', 'EXPLAIN', 'EXPORT', 'FROM', 'GRANT', 'IMPORT', 'INSERT', 'LIST', 'LOAD', 'LOCK', 'MAP', 'MERGE', 'MSCK', 'REDUCE', 'REFRESH', 'REPLACE', 'RESET', 'REVOKE', 'ROLLBACK', 'SELECT', 'SET', 'SHOW', 'START', 'TABLE', 'TRUNCATE', 'UNCACHE', 'UNLOCK', 'UPDATE', 'USE', 'VALUES', 'WITH'}(line 1, pos 0)

== SQL ==
spark.version
^^^

    at org.apache.spark.sql.catalyst.parser.ParseException.withCommand(ParseDriver.scala:255)
    at org.apache.spark.sql.catalyst.parser.AbstractSqlParser.parse(ParseDriver.scala:124)
    at org.apache.spark.sql.execution.SparkSqlParser.parse(SparkSqlParser.scala:49)
    at org.apache.spark.sql.catalyst.parser.AbstractSqlParser.parsePlan(ParseDriver.scala:75)
    at org.apache.spark.sql.SparkSession.$anonfun$sql$2(SparkSession.scala:616)
    at org.apache.spark.sql.catalyst.QueryPlanningTracker.measurePhase(QueryPlanningTracker.scala:111)
    at org.apache.spark.sql.SparkSession.$anonfun$sql$1(SparkSession.scala:616)
    at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:775)
    at org.apache.spark.sql.SparkSession.sql(SparkSession.scala:613)
    at org.apache.kyuubi.engine.spark.operation.ExecuteStatement.$anonfun$executeStatement$1(ExecuteStatement.scala:98)
    at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)
    at org.apache.kyuubi.engine.spark.operation.ExecuteStatement.withLocalProperties(ExecuteStatement.scala:157)
    at org.apache.kyuubi.engine.spark.operation.ExecuteStatement.org$apache$kyuubi$engine$spark$operation$ExecuteStatement$$executeStatement(ExecuteStatement.scala:92)
    at org.apache.kyuubi.engine.spark.operation.ExecuteStatement$$anon$1.run(ExecuteStatement.scala:125)
    at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)
    at java.util.concurrent.FutureTask.run(FutureTask.java:266)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
    at java.lang.Thread.run(Thread.java:748)
org.apache.spark.sql.catalyst.parser.ParseException:
mismatched input 'spark' expecting {'(', 'ADD', 'ALTER', 'ANALYZE', 'CACHE', 'CLEAR', 'COMMENT', 'COMMIT', 'CREATE', 'DELETE', 'DESC', 'DESCRIBE', 'DFS', 'DROP', 'EXPLAIN', 'EXPORT', 'FROM', 'GRANT', 'IMPORT', 'INSERT', 'LIST', 'LOAD', 'LOCK', 'MAP', 'MERGE', 'MSCK', 'REDUCE', 'REFRESH', 'REPLACE', 'RESET', 'REVOKE', 'ROLLBACK', 'SELECT', 'SET', 'SHOW', 'START', 'TABLE', 'TRUNCATE', 'UNCACHE', 'UNLOCK', 'UPDATE', 'USE', 'VALUES', 'WITH'}(line 1, pos 0)

== SQL ==
spark.version
^^^

    at org.apache.spark.sql.catalyst.parser.ParseException.withCommand(ParseDriver.scala:255)
    at org.apache.spark.sql.catalyst.parser.AbstractSqlParser.parse(ParseDriver.scala:124)
    at org.apache.spark.sql.execution.SparkSqlParser.parse(SparkSqlParser.scala:49)
    at org.apache.spark.sql.catalyst.parser.AbstractSqlParser.parsePlan(ParseDriver.scala:75)
    at org.apache.spark.sql.SparkSession.$anonfun$sql$2(SparkSession.scala:616)
    at org.apache.spark.sql.catalyst.QueryPlanningTracker.measurePhase(QueryPlanningTracker.scala:111)
    at org.apache.spark.sql.SparkSession.$anonfun$sql$1(SparkSession.scala:616)
    at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:775)
    at org.apache.spark.sql.SparkSession.sql(SparkSession.scala:613)
    at org.apache.kyuubi.engine.spark.operation.ExecuteStatement.$anonfun$executeStatement$1(ExecuteStatement.scala:98)
    at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)
    at org.apache.kyuubi.engine.spark.operation.ExecuteStatement.withLocalProperties(ExecuteStatement.scala:157)
    at org.apache.kyuubi.engine.spark.operation.ExecuteStatement.org$apache$kyuubi$engine$spark$operation$ExecuteStatement$$executeStatement(ExecuteStatement.scala:92)
    at org.apache.kyuubi.engine.spark.operation.ExecuteStatement$$anon$1.run(ExecuteStatement.scala:125)
    at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)
    at java.util.concurrent.FutureTask.run(FutureTask.java:266)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
    at java.lang.Thread.run(Thread.java:748)
2021-12-03 13:47:07.569 INFO operation.ExecuteStatement: Query[08b8b6da-d434-4296-b613-2027e3518441] in ERROR_STATE
2021-12-03 13:47:07.569 INFO operation.ExecuteStatement: Processing kent's query[08b8b6da-d434-4296-b613-2027e3518441]: RUNNING_STATE -> ERROR_STATE, statement: spark.version, time taken: 0.009 seconds
Error: Error operating EXECUTE_STATEMENT: org.apache.spark.sql.catalyst.parser.ParseException:
mismatched input 'spark' expecting {'(', 'ADD', 'ALTER', 'ANALYZE', 'CACHE', 'CLEAR', 'COMMENT', 'COMMIT', 'CREATE', 'DELETE', 'DESC', 'DESCRIBE', 'DFS', 'DROP', 'EXPLAIN', 'EXPORT', 'FROM', 'GRANT', 'IMPORT', 'INSERT', 'LIST', 'LOAD', 'LOCK', 'MAP', 'MERGE', 'MSCK', 'REDUCE', 'REFRESH', 'REPLACE', 'RESET', 'REVOKE', 'ROLLBACK', 'SELECT', 'SET', 'SHOW', 'START', 'TABLE', 'TRUNCATE', 'UNCACHE', 'UNLOCK', 'UPDATE', 'USE', 'VALUES', 'WITH'}(line 1, pos 0)

== SQL ==
spark.version
^^^

    at org.apache.spark.sql.catalyst.parser.ParseException.withCommand(ParseDriver.scala:255)
    at org.apache.spark.sql.catalyst.parser.AbstractSqlParser.parse(ParseDriver.scala:124)
    at org.apache.spark.sql.execution.SparkSqlParser.parse(SparkSqlParser.scala:49)
    at org.apache.spark.sql.catalyst.parser.AbstractSqlParser.parsePlan(ParseDriver.scala:75)
    at org.apache.spark.sql.SparkSession.$anonfun$sql$2(SparkSession.scala:616)
    at org.apache.spark.sql.catalyst.QueryPlanningTracker.measurePhase(QueryPlanningTracker.scala:111)
    at org.apache.spark.sql.SparkSession.$anonfun$sql$1(SparkSession.scala:616)
    at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:775)
    at org.apache.spark.sql.SparkSession.sql(SparkSession.scala:613)
    at org.apache.kyuubi.engine.spark.operation.ExecuteStatement.$anonfun$executeStatement$1(ExecuteStatement.scala:98)
    at scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.java:23)
    at org.apache.kyuubi.engine.spark.operation.ExecuteStatement.withLocalProperties(ExecuteStatement.scala:157)
    at org.apache.kyuubi.engine.spark.operation.ExecuteStatement.org$apache$kyuubi$engine$spark$operation$ExecuteStatement$$executeStatement(ExecuteStatement.scala:92)
    at org.apache.kyuubi.engine.spark.operation.ExecuteStatement$$anon$1.run(ExecuteStatement.scala:125)
    at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)
    at java.util.concurrent.FutureTask.run(FutureTask.java:266)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
    at java.lang.Thread.run(Thread.java:748) (state=,code=0)
0: jdbc:hive2://10.242.189.214:10009/> set kyuubi.operation.language=scala;
2021-12-03 13:47:11.982 INFO operation.ExecuteStatement: Processing kent's query[e4e4dc55-4e05-460e-9d9d-86b2cf5c054b]: INITIALIZED_STATE -> PENDING_STATE, statement: set kyuubi.operation.language=scala
2021-12-03 13:47:11.985 INFO operation.ExecuteStatement: Processing kent's query[e4e4dc55-4e05-460e-9d9d-86b2cf5c054b]: PENDING_STATE -> RUNNING_STATE, statement: set kyuubi.operation.language=scala
2021-12-03 13:47:11.983 INFO operation.ExecuteStatement: Processing kent's query[2b6ec68c-2ae9-4d93-a35b-9e2319df4d4f]: INITIALIZED_STATE -> PENDING_STATE, statement: set kyuubi.operation.language=scala
2021-12-03 13:47:11.984 INFO operation.ExecuteStatement: Processing kent's query[2b6ec68c-2ae9-4d93-a35b-9e2319df4d4f]: PENDING_STATE -> RUNNING_STATE, statement: set kyuubi.operation.language=scala
2021-12-03 13:47:11.985 INFO operation.ExecuteStatement:
    Spark application name: kyuubi_USER_SPARK_SQL_kent_default_61cff9fb-7035-4435-b509-80c1730876ed
    application ID: local-1638510289918
    application web UI: http://10.242.189.214:65027
    master: local[*]
    deploy mode: client
    version: 3.1.2
    Start time: 2021-12-03T13:44:49.313
    User: kent
2021-12-03 13:47:11.995 INFO operation.ExecuteStatement: Processing kent's query[2b6ec68c-2ae9-4d93-a35b-9e2319df4d4f]: RUNNING_STATE -> RUNNING_STATE, statement: set kyuubi.operation.language=scala
2021-12-03 13:47:11.995 INFO operation.ExecuteStatement: Execute in full collect mode
2021-12-03 13:47:12.006 INFO operation.ExecuteStatement: Processing kent's query[2b6ec68c-2ae9-4d93-a35b-9e2319df4d4f]: RUNNING_STATE -> FINISHED_STATE, statement: set kyuubi.operation.language=scala, time taken: 0.022 seconds
2021-12-03 13:47:12.007 INFO operation.ExecuteStatement: Query[e4e4dc55-4e05-460e-9d9d-86b2cf5c054b] in FINISHED_STATE
2021-12-03 13:47:12.007 INFO operation.ExecuteStatement: Processing kent's query[e4e4dc55-4e05-460e-9d9d-86b2cf5c054b]: RUNNING_STATE -> FINISHED_STATE, statement: set kyuubi.operation.language=scala, time taken: 0.022 seconds
+----------------------------+--------+
|            key             | value  |
+----------------------------+--------+
| kyuubi.operation.language  | scala  |
+----------------------------+--------+
1 row selected (0.052 seconds)
0: jdbc:hive2://10.242.189.214:10009/> spark.version;
2021-12-03 13:47:13.685 INFO operation.ExecuteStatement: Processing kent's query[178ff72d-b870-44d4-99d8-08a0fa0e8efa]: INITIALIZED_STATE -> PENDING_STATE, statement: spark.version
2021-12-03 13:47:15.541 INFO operation.ExecuteStatement: Processing kent's query[178ff72d-b870-44d4-99d8-08a0fa0e8efa]: PENDING_STATE -> RUNNING_STATE, statement: spark.version
2021-12-03 13:47:15.543 INFO operation.ExecuteStatement: Query[178ff72d-b870-44d4-99d8-08a0fa0e8efa] in FINISHED_STATE
2021-12-03 13:47:15.544 INFO operation.ExecuteStatement: Processing kent's query[178ff72d-b870-44d4-99d8-08a0fa0e8efa]: RUNNING_STATE -> FINISHED_STATE, statement: spark.version, time taken: 0.003 seconds
+-----------------------+
|        output         |
+-----------------------+
| res0: String = 3.1.2  |
+-----------------------+
1 row selected (1.871 seconds)
0: jdbc:hive2://10.242.189.214:10009/> spark.sql("select current_date()")
. . . . . . . . . . . . . . . . . . .> ;
2021-12-03 13:47:36.512 INFO operation.ExecuteStatement: Processing kent's query[602c2d88-7e8a-4175-9b53-e34adfff007a]: INITIALIZED_STATE -> PENDING_STATE, statement: spark.sql("select current_date()")
2021-12-03 13:47:36.689 INFO operation.ExecuteStatement: Processing kent's query[602c2d88-7e8a-4175-9b53-e34adfff007a]: PENDING_STATE -> RUNNING_STATE, statement: spark.sql("select current_date()")
2021-12-03 13:47:36.692 INFO operation.ExecuteStatement: Query[602c2d88-7e8a-4175-9b53-e34adfff007a] in FINISHED_STATE
2021-12-03 13:47:36.692 INFO operation.ExecuteStatement: Processing kent's query[602c2d88-7e8a-4175-9b53-e34adfff007a]: RUNNING_STATE -> FINISHED_STATE, statement: spark.sql("select current_date()"), time taken: 0.003 seconds
+----------------------------------------------------+
|                       output                       |
+----------------------------------------------------+
| res1: org.apache.spark.sql.DataFrame = [current_date(): date] |
+----------------------------------------------------+
1 row selected (0.187 seconds)
0: jdbc:hive2://10.242.189.214:10009/> results += spark.range(1, 5, 2, 3);
Error: Error operating EXECUTE_STATEMENT: org.apache.kyuubi.KyuubiSQLException: Interpret error:
results += spark.range(1, 5, 2, 3)
<console>:26: error: type mismatch;
 found   : org.apache.spark.sql.Dataset[Long]
 required: org.apache.spark.sql.Dataset[org.apache.spark.sql.Row]
       results += spark.range(1, 5, 2, 3)
                  ^
    at org.apache.kyuubi.KyuubiSQLException$.apply(KyuubiSQLException.scala:69)
    at org.apache.kyuubi.engine.spark.operation.ExecuteScala.runInternal(ExecuteScala.scala:70)
    at org.apache.kyuubi.operation.AbstractOperation.run(AbstractOperation.scala:130)
    at org.apache.kyuubi.session.AbstractSession.runOperation(AbstractSession.scala:93)
    at org.apache.kyuubi.engine.spark.session.SparkSessionImpl.runOperation(SparkSessionImpl.scala:62)
    at org.apache.kyuubi.session.AbstractSession.$anonfun$executeStatement$1(AbstractSession.scala:121)
    at org.apache.kyuubi.session.AbstractSession.withAcquireRelease(AbstractSession.scala:75)
    at org.apache.kyuubi.session.AbstractSession.executeStatement(AbstractSession.scala:118)
    at org.apache.kyuubi.service.AbstractBackendService.executeStatement(AbstractBackendService.scala:61)
    at org.apache.kyuubi.service.ThriftBinaryFrontendService.ExecuteStatement(ThriftBinaryFrontendService.scala:265)
    at org.apache.hive.service.rpc.thrift.TCLIService$Processor$ExecuteStatement.getResult(TCLIService.java:1557)
    at org.apache.hive.service.rpc.thrift.TCLIService$Processor$ExecuteStatement.getResult(TCLIService.java:1542)
    at org.apache.thrift.ProcessFunction.process(ProcessFunction.java:38)
    at org.apache.thrift.TBaseProcessor.process(TBaseProcessor.java:39)
    at org.apache.kyuubi.service.authentication.TSetIpAddressProcessor.process(TSetIpAddressProcessor.scala:36)
    at org.apache.thrift.server.TThreadPoolServer$WorkerProcess.run(TThreadPoolServer.java:310)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
    at java.lang.Thread.run(Thread.java:748) (state=,code=0)
0: jdbc:hive2://10.242.189.214:10009/> results += spark.range(1, 5, 2, 3).toDF;
2021-12-03 13:48:30.327 INFO operation.ExecuteStatement: Processing kent's query[daccd163-978a-4d66-af85-af466086d1db]: INITIALIZED_STATE -> PENDING_STATE, statement: results += spark.range(1, 5, 2, 3).toDF
2021-12-03 13:48:31.700 INFO operation.ExecuteStatement: Processing kent's query[daccd163-978a-4d66-af85-af466086d1db]: PENDING_STATE -> RUNNING_STATE, statement: results += spark.range(1, 5, 2, 3).toDF
2021-12-03 13:48:31.702 INFO operation.ExecuteStatement: Query[daccd163-978a-4d66-af85-af466086d1db] in FINISHED_STATE
2021-12-03 13:48:31.702 INFO operation.ExecuteStatement: Processing kent's query[daccd163-978a-4d66-af85-af466086d1db]: RUNNING_STATE -> FINISHED_STATE, statement: results += spark.range(1, 5, 2, 3).toDF, time taken: 0.003 seconds
+-----+
| id  |
+-----+
| 1   |
| 3   |
+-----+
2 rows selected (1.387 seconds)
```

**Session level isolated**

- [x] [Run test](https://kyuubi.readthedocs.io/en/latest/develop_tools/testing.html#running-tests) locally before making a pull request

Closes #1491 from yaooqinn/scala.

Closes #1490

af4d0a13 [Kent Yao] Merge branch 'master' into scala
2ebdf6a3 [Kent Yao] provided scala dep
d58b2bfe [Kent Yao] [KYUUBI #1490] Introduce the basic framework for running scala
d256bfde [Kent Yao] [KYUUBI #1490] Introduce the basic framework for running scala
52090434 [Kent Yao] init

Authored-by: Kent Yao <yao@apache.org>
Signed-off-by: Kent Yao <yao@apache.org>
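The log shows that `set kyuubi.operation.language=scala` changes how later statements in the same session are executed, and the PR notes that this setting is isolated per session. Below is a minimal Scala sketch of that dispatch idea. It is not Kyuubi's implementation: `Session`, `OperationLanguage` and `dispatch` are hypothetical names, and the returned strings only stand in for the `ExecuteStatement` and `ExecuteScala` operations that appear in the stack traces. The sketch also assumes that SQL is the default when the key is unset.

```scala
import java.util.Locale
import scala.collection.mutable

// Hypothetical model of per-session language dispatch; not Kyuubi's actual API.
object LanguageDispatchSketch {

  val LanguageKey = "kyuubi.operation.language"

  sealed trait OperationLanguage
  case object SqlLanguage extends OperationLanguage
  case object ScalaLanguage extends OperationLanguage

  // Case-insensitive parsing; unknown values are rejected rather than silently ignored.
  def parseLanguage(value: String): OperationLanguage =
    value.trim.toUpperCase(Locale.ROOT) match {
      case "SQL"   => SqlLanguage
      case "SCALA" => ScalaLanguage
      case other   => throw new IllegalArgumentException(s"Unsupported language: $other")
    }

  // Each session owns its own configuration map, so a `set` in one session
  // cannot change how statements in another session are executed.
  final class Session(val user: String) {
    private val conf = mutable.Map.empty[String, String]

    def set(key: String, value: String): Unit = conf(key) = value

    // Assumption: SQL is the default when the key has not been set.
    def language: OperationLanguage =
      conf.get(LanguageKey).map(parseLanguage).getOrElse(SqlLanguage)

    // Choose the operation type from this session's current language.
    def dispatch(statement: String): String = language match {
      case SqlLanguage   => s"ExecuteStatement: $statement"
      case ScalaLanguage => s"ExecuteScala: $statement"
    }
  }
}
```

In this model, a session that never sets the key keeps routing `spark.version` to the SQL path. That matches the first `spark.version;` in the log, which fails with a `ParseException` until the language is switched.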

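The Scala statements in the log return two kinds of result. A snippet that only evaluates an expression returns the interpreter's echo (`res0: String = 3.1.2`) in a single `output` column. A snippet that appends a DataFrame to `results` returns that DataFrame's rows instead. The type error suggests that `results` accepts only `Dataset[Row]`. `spark.range(1, 5, 2, 3)` takes start, end (exclusive), step and partition count and yields a `Dataset` of longs, so it needs `.toDF` before it can be appended. The plain-Scala sketch below models only this selection rule. `Table`, `toResultSet` and `rangeIds` are hypothetical names. Returning the most recently added DataFrame is an assumption, because the log never shows more than one.

```scala
// Hypothetical model of how a Scala snippet's outcome could become a result set.
object ScalaResultSketch {

  // Stand-in for a DataFrame: column names plus rows.
  final case class Table(columns: Seq[String], rows: Seq[Seq[Any]])

  // Assumption: if the snippet appended tables to `results`, return the most recent one;
  // otherwise wrap the interpreter's echoed text in a one-row `output` column.
  def toResultSet(results: Seq[Table], replOutput: String): Table =
    results.lastOption.getOrElse(Table(Seq("output"), Seq(Seq(replOutput.trim))))

  // Same ids as spark.range(start, end, step, numPartitions): start inclusive, end exclusive.
  // The partition count affects only how rows are distributed, not the values, so it is omitted.
  def rangeIds(start: Long, end: Long, step: Long): Seq[Long] = start until end by step
}
```

With `start = 1`, `end = 5` and `step = 2`, `rangeIds` yields 1 and 3, the same two `id` rows that the final statement in the log returns.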
231 lines

8.4 KiB

XML

<?xml version="1.0" encoding="UTF-8"?>
<!--
  ~ Licensed to the Apache Software Foundation (ASF) under one or more
  ~ contributor license agreements. See the NOTICE file distributed with
  ~ this work for additional information regarding copyright ownership.
  ~ The ASF licenses this file to You under the Apache License, Version 2.0
  ~ (the "License"); you may not use this file except in compliance with
  ~ the License. You may obtain a copy of the License at
  ~
  ~ http://www.apache.org/licenses/LICENSE-2.0
  ~
  ~ Unless required by applicable law or agreed to in writing, software
  ~ distributed under the License is distributed on an "AS IS" BASIS,
  ~ WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  ~ See the License for the specific language governing permissions and
  ~ limitations under the License.
  -->

<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <parent>
        <groupId>org.apache.kyuubi</groupId>
        <artifactId>kyuubi-parent</artifactId>
        <version>1.5.0-SNAPSHOT</version>
        <relativePath>../../pom.xml</relativePath>
    </parent>
    <modelVersion>4.0.0</modelVersion>

    <artifactId>kyuubi-spark-sql-engine_2.12</artifactId>
    <name>Kyuubi Project Engine Spark SQL</name>
    <packaging>jar</packaging>

    <dependencies>
        <dependency>
            <groupId>org.apache.kyuubi</groupId>
            <artifactId>kyuubi-common_${scala.binary.version}</artifactId>
            <version>${project.version}</version>
        </dependency>

        <dependency>
            <groupId>org.apache.kyuubi</groupId>
            <artifactId>kyuubi-ha_${scala.binary.version}</artifactId>
            <version>${project.version}</version>
        </dependency>

        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-sql_${scala.binary.version}</artifactId>
            <scope>provided</scope>
        </dependency>

        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-repl_${scala.binary.version}</artifactId>
            <scope>provided</scope>
        </dependency>

        <dependency>
            <groupId>org.scala-lang</groupId>
            <artifactId>scala-compiler</artifactId>
            <scope>provided</scope>
        </dependency>

        <dependency>
            <groupId>org.scala-lang</groupId>
            <artifactId>scala-reflect</artifactId>
            <scope>provided</scope>
        </dependency>

        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client-api</artifactId>
            <scope>provided</scope>
        </dependency>

        <dependency>
            <groupId>org.apache.kyuubi</groupId>
            <artifactId>kyuubi-common_${scala.binary.version}</artifactId>
            <version>${project.version}</version>
            <type>test-jar</type>
            <scope>test</scope>
        </dependency>

        <!--
          Spark requires `commons-collections` and `commons-io` but got them from transitive
          dependencies of `hadoop-client`. As we are using Hadoop Shaded Client, we need to add
          them explicitly. See more details at SPARK-33212.
          -->
        <dependency>
            <groupId>commons-collections</groupId>
            <artifactId>commons-collections</artifactId>
            <scope>test</scope>
        </dependency>

        <dependency>
            <groupId>commons-io</groupId>
            <artifactId>commons-io</artifactId>
            <scope>test</scope>
        </dependency>

        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-hive_${scala.binary.version}</artifactId>
            <scope>test</scope>
        </dependency>

        <dependency>
            <groupId>org.apache.kyuubi</groupId>
            <artifactId>kyuubi-hive-jdbc-shaded</artifactId>
            <version>${project.version}</version>
            <scope>test</scope>
        </dependency>

        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client-runtime</artifactId>
            <scope>test</scope>
        </dependency>

        <dependency>
            <groupId>org.slf4j</groupId>
            <artifactId>jul-to-slf4j</artifactId>
            <scope>test</scope>
        </dependency>

        <dependency>
            <groupId>org.apache.iceberg</groupId>
            <artifactId>${iceberg.name}</artifactId>
            <scope>test</scope>
        </dependency>

        <dependency>
            <groupId>org.apache.spark</groupId>
            <artifactId>spark-avro_${scala.binary.version}</artifactId>
            <scope>test</scope>
        </dependency>

        <dependency>
            <groupId>org.apache.hudi</groupId>
            <artifactId>hudi-spark-common_${scala.binary.version}</artifactId>
            <scope>test</scope>
        </dependency>

        <dependency>
            <groupId>org.apache.hudi</groupId>
            <artifactId>hudi-spark3_${scala.binary.version}</artifactId>
            <scope>test</scope>
        </dependency>

        <dependency>
            <groupId>org.apache.parquet</groupId>
            <artifactId>parquet-avro</artifactId>
            <scope>test</scope>
        </dependency>

        <dependency>
            <groupId>org.apache.hudi</groupId>
            <artifactId>hudi-spark_${scala.binary.version}</artifactId>
            <scope>test</scope>
        </dependency>

        <dependency>
            <groupId>io.delta</groupId>
            <artifactId>delta-core_${scala.binary.version}</artifactId>
            <scope>test</scope>
        </dependency>

        <dependency>
            <groupId>org.apache.kyuubi</groupId>
            <artifactId>kyuubi-zookeeper_${scala.binary.version}</artifactId>
            <version>${project.version}</version>
            <scope>test</scope>
        </dependency>
    </dependencies>

    <build>
        <outputDirectory>target/scala-${scala.binary.version}/classes</outputDirectory>
        <testOutputDirectory>target/scala-${scala.binary.version}/test-classes</testOutputDirectory>
        <plugins>
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-shade-plugin</artifactId>
                <configuration>
                    <shadedArtifactAttached>false</shadedArtifactAttached>
                    <artifactSet>
                        <includes>
                            <include>org.apache.kyuubi:kyuubi-common_${scala.binary.version}</include>
                            <include>org.apache.kyuubi:kyuubi-ha_${scala.binary.version}</include>
                            <include>org.apache.curator:curator-client</include>
                            <include>org.apache.curator:curator-framework</include>
                            <include>org.apache.curator:curator-recipes</include>
                        </includes>
                    </artifactSet>
                    <relocations>
                        <relocation>
                            <pattern>org.apache.curator</pattern>
                            <shadedPattern>${kyuubi.shade.packageName}.org.apache.curator</shadedPattern>
                            <includes>
                                <include>org.apache.curator.**</include>
                            </includes>
                        </relocation>
                    </relocations>
                </configuration>
                <executions>
                    <execution>
                        <phase>package</phase>
                        <goals>
                            <goal>shade</goal>
                        </goals>
                    </execution>
                </executions>
            </plugin>

            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-jar-plugin</artifactId>
                <executions>
                    <execution>
                        <id>prepare-test-jar</id>
                        <phase>test-compile</phase>
                        <goals>
                            <goal>test-jar</goal>
                        </goals>
                    </execution>
                </executions>
            </plugin>
        </plugins>
    </build>
</project>
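In the build section of this pom, `maven-shade-plugin` bundles only `kyuubi-common`, `kyuubi-ha` and the three Curator artifacts into the engine jar. It also relocates `org.apache.curator` under `${kyuubi.shade.packageName}`, presumably so that the bundled Curator cannot clash with a different Curator version on the Spark classpath. The plugin rewrites class-file names as well as the bytecode references to them. The Scala sketch below models only the name mapping implied by `<pattern>`, `<shadedPattern>` and the `org.apache.curator.**` include. The value `org.apache.kyuubi.shade` used for `kyuubi.shade.packageName` is an assumption here; the real value is defined in the parent pom.

```scala
// Hypothetical model of the class-name mapping performed by the Curator relocation.
object ShadeRelocationSketch {

  // Assumed value of ${kyuubi.shade.packageName}; check the parent pom for the real one.
  val ShadePackageName = "org.apache.kyuubi.shade"

  val Pattern = "org.apache.curator"
  val ShadedPattern = s"$ShadePackageName.$Pattern"

  // `org.apache.curator.**` matches classes in the package and all of its subpackages,
  // so only names that start with "org.apache.curator." are rewritten.
  def relocate(className: String): String =
    if (className.startsWith(Pattern + ".")) ShadedPattern + className.stripPrefix(Pattern)
    else className
}
```

Requiring the trailing dot in the prefix check prevents a look-alike package such as `org.apache.curatorx` from being relocated by accident.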