[KYUUBI #1008][FOLLOWUP] No need to send private token from Kyuubi server to engine

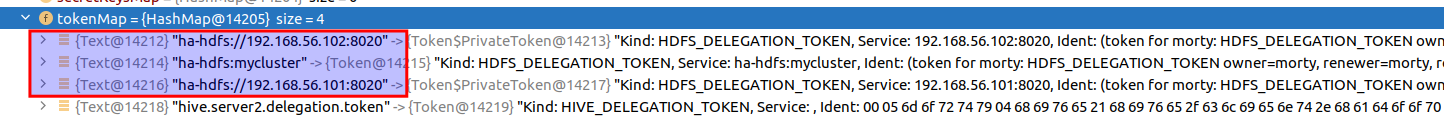

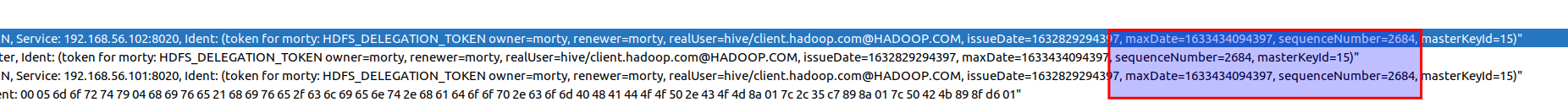

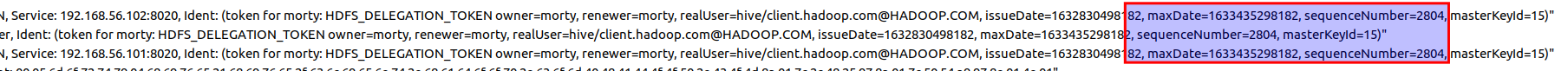

### _Why are the changes needed?_

When communicating with NameNode HA, private tokens are created from the original token.

In the image above, "ha-hdfs:mycluster" is the original token. "ha-hdfs://192.168.56.101:8020" and "ha-hdfs://192.168.56.102:8020" are private tokens.

`KyuubiHadoopUtils.getCredentialsInternal` was supposed to extract these private tokens on the Kyuubi server side and send them to the SQL engine. In fact, the private tokens on the SQL engine side are created automatically when the original token is added, inside `org.apache.hadoop.security.Credentials#addToken`.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [x] Add screenshots for manual tests if appropriate

  The SequenceNumber of the private tokens is always equal to that of the original token.

- [x] [Run test](https://kyuubi.readthedocs.io/en/latest/develop_tools/testing.html#running-tests) locally before make a pull request

Closes #1173 from zhouyifan279/#1008.

Closes #1008

891dc2b6 [zhouyifan279] [KYUUBI #1008][FOLLOWUP] No need to send private token from Kyuubi server to engine

Authored-by: zhouyifan279 <zhouyifan279@gmail.com>
Signed-off-by: Kent Yao <yao@apache.org>
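The token behavior described in the commit message can be illustrated with a small, dependency-free toy model. This is only a sketch under stated assumptions: `ToyToken` and `ToyCredentials` are hypothetical stand-ins rather than Hadoop classes, and the refresh logic models the described behavior (private tokens follow the original token when it is added again) without reproducing the actual body of `Credentials#addToken`.

```scala
// Toy model only: ToyToken and ToyCredentials are hypothetical stand-ins,
// not Hadoop's Token or Credentials classes.
final case class ToyToken(
    service: String,
    sequenceNumber: Int,
    privateCloneOf: Option[String] = None) {

  // A private clone keeps the token contents but is bound to another service.
  def privateClone(newService: String): ToyToken = {
    val publicService = privateCloneOf.getOrElse(service)
    copy(service = newService, privateCloneOf = Some(publicService))
  }
}

final class ToyCredentials {
  private val tokenMap =
    scala.collection.mutable.LinkedHashMap.empty[String, ToyToken]

  def addToken(alias: String, token: ToyToken): Unit = {
    val replacedExisting = tokenMap.put(alias, token).isDefined
    if (replacedExisting) {
      // Assumption: private clones of `alias` are re-derived from the new token.
      val refreshed = tokenMap.toList.collect {
        case (key, old) if old.privateCloneOf.contains(alias) =>
          key -> token.privateClone(old.service)
      }
      tokenMap ++= refreshed
    }
  }

  def getToken(alias: String): Option[ToyToken] = tokenMap.get(alias)
}

object PrivateTokenSketch {
  def main(args: Array[String]): Unit = {
    val original = "ha-hdfs:mycluster"
    val nameNodes =
      Seq("ha-hdfs://192.168.56.101:8020", "ha-hdfs://192.168.56.102:8020")

    val creds = new ToyCredentials
    val first = ToyToken(original, sequenceNumber = 1)
    creds.addToken(original, first)
    nameNodes.foreach(nn => creds.addToken(nn, first.privateClone(nn)))

    // Only the renewed original token is added; no private token is sent.
    creds.addToken(original, ToyToken(original, sequenceNumber = 2))

    nameNodes.foreach { nn =>
      assert(creds.getToken(nn).map(_.sequenceNumber).contains(2))
    }
    println("private tokens follow the original token")
  }
}
```

In this model, sending only the original token is enough for the receiving side to end up with matching private tokens, which is the reason the patch gives for dropping `getCredentialsInternal`.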

parent d332534325
commit d44ccc3644

@@ -48,7 +48,7 @@ class SparkThriftBinaryFrontendService(
     KyuubiHadoopUtils.getTokenMap(newCreds).partition(_._2.getKind == HIVE_DELEGATION_TOKEN)

   val updateCreds = new Credentials()
-  val oldCreds = KyuubiHadoopUtils.getCredentialsInternal(UserGroupInformation.getCurrentUser)
+  val oldCreds = UserGroupInformation.getCurrentUser.getCredentials
   addHiveToken(hiveTokens, oldCreds, updateCreds)
   addOtherTokens(otherTokens, oldCreds, updateCreds)
   if (updateCreds.numberOfTokens() > 0) {

@@ -806,8 +806,7 @@ class SparkOperationSuite extends WithSparkSQLEngine with HiveJDBCTests {
   creds2.addToken(new Text("HDFS2"), extraHDFSToken)
   sendCredentials(client, creds2)
   // SparkSQLEngine's tokens should be updated
-  var engineCredentials =
-    KyuubiHadoopUtils.getCredentialsInternal(UserGroupInformation.getCurrentUser)
+  var engineCredentials = UserGroupInformation.getCurrentUser.getCredentials
   assert(engineCredentials.getToken(hdfsTokenAlias) == creds2.getToken(hdfsTokenAlias))
   assert(
     engineCredentials.getToken(hiveTokenAlias) == creds2.getToken(new Text(metastoreUris)))

@@ -818,8 +817,7 @@ class SparkOperationSuite extends WithSparkSQLEngine with HiveJDBCTests {
   val creds3 = createCredentials(currentTime, hdfsTokenAlias.toString, metastoreUris)
   sendCredentials(client, creds3)
   // SparkSQLEngine's tokens should not be updated
-  engineCredentials =
-    KyuubiHadoopUtils.getCredentialsInternal(UserGroupInformation.getCurrentUser)
+  engineCredentials = UserGroupInformation.getCurrentUser.getCredentials
   assert(engineCredentials.getToken(hdfsTokenAlias) == creds2.getToken(hdfsTokenAlias))
   assert(
     engineCredentials.getToken(hiveTokenAlias) == creds2.getToken(new Text(metastoreUris)))

@@ -828,8 +826,7 @@ class SparkOperationSuite extends WithSparkSQLEngine with HiveJDBCTests {
   val creds4 = createCredentials(currentTime + 2, "HDFS2", "thrift://localhost:9085")
   sendCredentials(client, creds4)
   // No token is updated
-  engineCredentials =
-    KyuubiHadoopUtils.getCredentialsInternal(UserGroupInformation.getCurrentUser)
+  engineCredentials = UserGroupInformation.getCurrentUser.getCredentials
   assert(engineCredentials.getToken(hdfsTokenAlias) == creds2.getToken(hdfsTokenAlias))
   assert(
     engineCredentials.getToken(hiveTokenAlias) == creds2.getToken(new Text(metastoreUris)))

@@ -19,7 +19,6 @@ package org.apache.kyuubi.util

 import java.io.{ByteArrayInputStream, ByteArrayOutputStream, DataInputStream, DataOutputStream}
 import java.util.{Map => JMap}
-import javax.security.auth.Subject

 import scala.collection.JavaConverters._


@@ -38,12 +37,6 @@ object KyuubiHadoopUtils {
     classOf[UserGroupInformation].getDeclaredField("subject")
   subjectField.setAccessible(true)

-  private val getCredentialsInternalMethod =
-    classOf[UserGroupInformation].getDeclaredMethod(
-      "getCredentialsInternal",
-      Array.empty[Class[_]]: _*)
-  getCredentialsInternalMethod.setAccessible(true)
-
   private val tokenMapField =
     classOf[Credentials].getDeclaredField("tokenMap")
   tokenMapField.setAccessible(true)

@@ -76,19 +69,6 @@ object KyuubiHadoopUtils {
     creds
   }

-  /**
-   * Get all tokens in [[UserGroupInformation#subject]] including
-   * [[org.apache.hadoop.security.token.Token.PrivateToken]] as
-   * [[UserGroupInformation#getCredentials]] returned Credentials do not contain
-   * [[org.apache.hadoop.security.token.Token.PrivateToken]].
-   */
-  def getCredentialsInternal(ugi: UserGroupInformation): Credentials = {
-    // Synchronize to avoid credentials being written while cloning credentials
-    subjectField.get(ugi).asInstanceOf[Subject] synchronized {
-      new Credentials(getCredentialsInternalMethod.invoke(ugi).asInstanceOf[Credentials])
-    }
-  }
-
   /**
    * Get [[Credentials#tokenMap]] by reflection as [[Credentials#getTokenMap]] is not present before
    * Hadoop 3.2.1.
