[KYUUBI #1776] Improve EnginePage

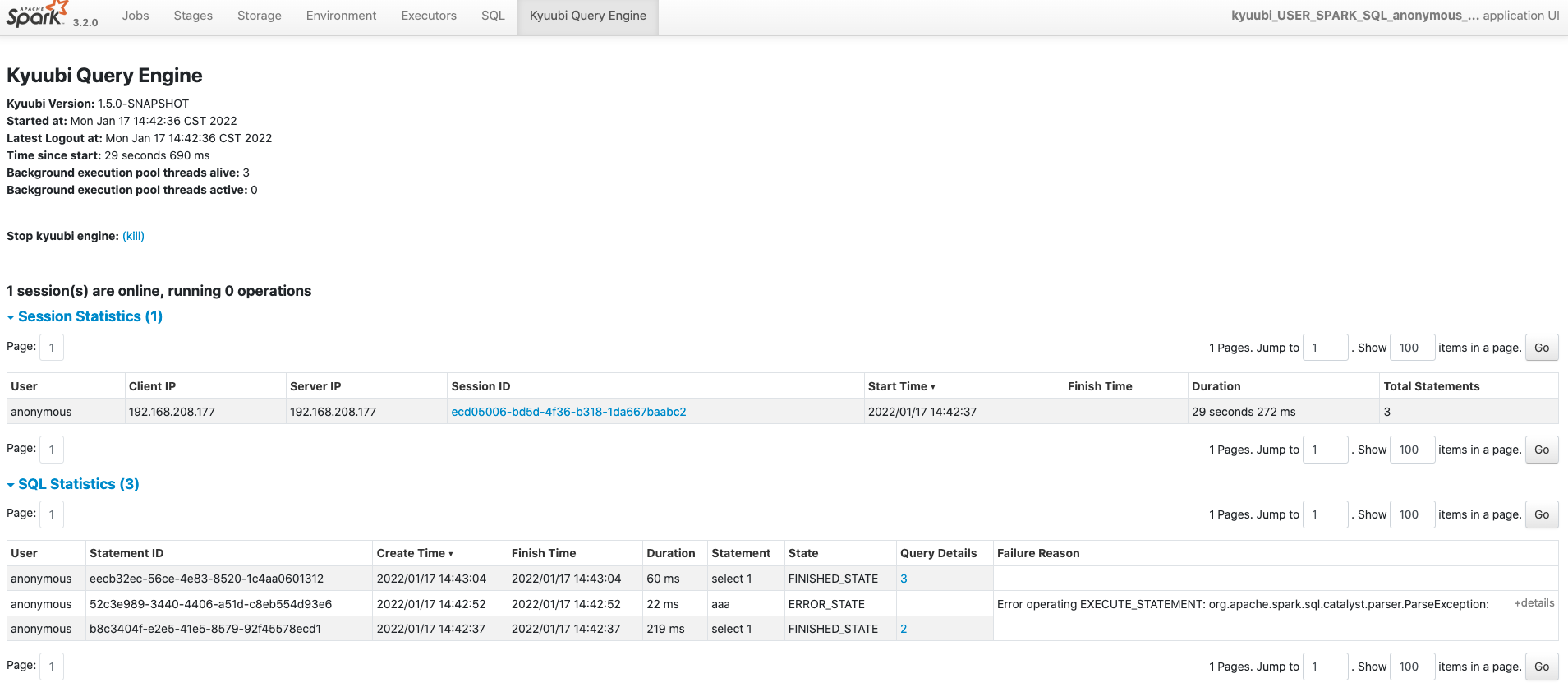

### _Why are the changes needed?_

Improve the UI page of the `Kyuubi Query Engine`. Currently, the `Query Execution` column in the `SQL Statistics` tab is not readable, because it shows the whole `queryExecution.toString` output of each statement. This PR:

1. Replaces the `Query Execution` column with `Query Details`, which links to the Spark SQL execution detail page.
2. Adds a new `Failure Reason` column that displays the exception message when a query fails.

### _How was this patch tested?_

- [ ] Add some test cases that check the changes thoroughly including negative and positive cases if possible
- [x] Add screenshots for manual tests if appropriate
- [ ] [Run test](https://kyuubi.readthedocs.io/en/latest/develop_tools/testing.html#running-tests) locally before making a pull request

Closes #1776 from cfmcgrady/ui.

Closes #1776

efab75a7 [Fu Chen] trigger GitHub actions
5b7001b2 [Fu Chen] fix ut
d9ccff89 [Fu Chen] fix style
717b916d [Fu Chen] Improve EnginePage

Authored-by: Fu Chen <cfmcgrady@gmail.com>
Signed-off-by: ulysses-you <ulyssesyou@apache.org>
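To make the two new columns concrete, here is a minimal, self-contained sketch in plain Scala (no Spark dependency). It is not Kyuubi's code: `StatementRow`, `EnginePageSketch`, `queryDetailsHref`, and `failureReason` are hypothetical names, and the event is assumed to carry only `executionId: Option[Long]` and `exception: Option[Throwable]`, mirroring the new `SparkOperationEvent` fields. The link format is copied from the string used in `StatementStatsPagedTable`.

```scala
// Hypothetical, simplified stand-in for SparkOperationEvent: only the fields
// read by the two new columns are modeled, and the names are illustrative.
final case class StatementRow(
    statementId: String,
    executionId: Option[Long],
    exception: Option[Throwable])

object EnginePageSketch {

  // "Query Details": link target in the same shape as the format string in
  // StatementStatsPagedTable. `basePath` stands in for the value of
  // UIUtils.prependBaseUri(request, parent.basePath); no link without an id.
  def queryDetailsHref(basePath: String, row: StatementRow): Option[String] =
    row.executionId.map(id => "%s/SQL/execution/?id=%s".format(basePath, id))

  // "Failure Reason": the exception message if the statement failed, else "".
  // Option(...) guards against exceptions created without a message (null).
  def failureReason(row: StatementRow): String =
    row.exception.flatMap(e => Option(e.getMessage)).getOrElse("")

  def main(args: Array[String]): Unit = {
    val succeeded = StatementRow("s1", Some(7L), None)
    val failed = StatementRow("s2", None, Some(new RuntimeException("simulated failure")))
    println(queryDetailsHref("/proxy/app-1", succeeded)) // Some(/proxy/app-1/SQL/execution/?id=7)
    println(queryDetailsHref("/proxy/app-1", failed)) // None
    println(failureReason(failed)) // simulated failure
  }
}
```

Keeping both values as `Option` lets each cell be skipped when there is nothing to show, rather than carrying a nullable `String` as the old `queryExecution` field did via `.orNull`.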


parent 7dcddafa6c
commit 250d2f17b6

@@ -17,7 +17,7 @@

 package org.apache.kyuubi.engine.spark.events

-import org.apache.spark.sql.{DataFrame, Encoders}
+import org.apache.spark.sql.Encoders
 import org.apache.spark.sql.types.StructType

 import org.apache.kyuubi.Utils

@@ -42,7 +42,7 @@ import org.apache.kyuubi.engine.spark.operation.SparkOperation
  * @param exception: caught exception if have
  * @param sessionId the identifier of the parent session
  * @param sessionUser the authenticated client user
- * @param queryExecution the query execution of this operation
+ * @param executionId the query execution id of this operation
  */
 case class SparkOperationEvent(
     statementId: String,

@@ -56,7 +56,7 @@ case class SparkOperationEvent(
     exception: Option[Throwable],
     sessionId: String,
     sessionUser: String,
-    queryExecution: String) extends KyuubiSparkEvent {
+    executionId: Option[Long]) extends KyuubiSparkEvent {

   override def schema: StructType = Encoders.product[SparkOperationEvent].schema
   override def partitions: Seq[(String, String)] =

@@ -72,7 +72,9 @@ case class SparkOperationEvent(
 }

 object SparkOperationEvent {
-  def apply(operation: SparkOperation, result: Option[DataFrame] = None): SparkOperationEvent = {
+  def apply(
+      operation: SparkOperation,
+      executionId: Option[Long] = None): SparkOperationEvent = {
     val session = operation.getSession
     val status = operation.getStatus
     new SparkOperationEvent(

@@ -87,6 +89,6 @@ object SparkOperationEvent {
       status.exception,
       session.handle.identifier.toString,
       session.user,
-      result.map(_.queryExecution.toString).orNull)
+      executionId)
   }
 }

@@ -146,6 +146,7 @@ class ExecuteStatement(

   override def setState(newState: OperationState): Unit = {
     super.setState(newState)
-    EventLoggingService.onEvent(SparkOperationEvent(this, Option(result)))
+    EventLoggingService.onEvent(
+      SparkOperationEvent(this, operationListener.getExecutionId))
   }
 }

@@ -44,6 +44,8 @@ class SQLOperationListener(
   private val activeStages = new java.util.HashSet[Int]()
   private var executionId: Option[Long] = None

+  def getExecutionId: Option[Long] = executionId
+
   // For broadcast, Spark will introduce a new runId as SPARK_JOB_GROUP_ID, see:
   // https://github.com/apache/spark/pull/24595, So we will miss these logs.
   // TODO: Fix this until the below ticket resolved

@@ -327,7 +327,8 @@ private class StatementStatsPagedTable(
       ("Duration", true, None),
       ("Statement", true, None),
       ("State", true, None),
-      ("Query Execution", true, None))
+      ("Query Details", true, None),
+      ("Failure Reason", true, None))

     headerStatRow(
       sqlTableHeadersAndTooltips,

@@ -365,8 +366,19 @@ private class StatementStatsPagedTable(
         {event.state}
       </td>
       <td>
-        {event.queryExecution}
+        {
+          if (event.executionId.isDefined) {
+            <a href={
+              "%s/SQL/execution/?id=%s".format(
+                UIUtils.prependBaseUri(request, parent.basePath),
+                event.executionId.get)
+            }>
+              {event.executionId.get}
+            </a>
+          }
+        }
       </td>
+      {if (event.exception.isDefined) errorMessageCell(event.exception.get.getMessage)}
     </tr>
   }

@@ -447,7 +459,6 @@ private class StatementStatsTableDataSource(
       case "Duration" => Ordering.by(_.duration)
       case "Statement" => Ordering.by(_.statement)
       case "State" => Ordering.by(_.state)
-      case "Query Execution" => Ordering.by(_.queryExecution)
       case unknownColumn => throw new IllegalArgumentException(s"Unknown column: $unknownColumn")
     }
     if (desc) {

@@ -94,7 +94,7 @@ class EngineEventsStoreSuite extends KyuubiFunSuite {
       None,
       "sid1",
       "a",
-      "")
+      None)
     val s2 = SparkOperationEvent(
       "ea2",
       "select 2",

@@ -107,7 +107,7 @@ class EngineEventsStoreSuite extends KyuubiFunSuite {
       None,
       "sid1",
       "c",
-      "")
+      None)
     val s3 = SparkOperationEvent(
       "ea3",
       "select 3",

@@ -120,7 +120,7 @@ class EngineEventsStoreSuite extends KyuubiFunSuite {
       None,
       "sid1",
       "b",
-      "")
+      None)

     store.saveStatement(s1)
     store.saveStatement(s2)

@@ -149,7 +149,7 @@ class EngineEventsStoreSuite extends KyuubiFunSuite {
       None,
       "sid1",
       "a",
-      "")
+      None)
     store.saveStatement(s)
   }

@@ -174,7 +174,7 @@ class EngineEventsStoreSuite extends KyuubiFunSuite {
       None,
       "sid1",
       "a",
-      ""))
+      None))
     store.saveStatement(SparkOperationEvent(
       "s2",
       "select 1",

@@ -187,7 +187,7 @@ class EngineEventsStoreSuite extends KyuubiFunSuite {
       None,
       "sid1",
       "a",
-      ""))
+      None))
     store.saveStatement(SparkOperationEvent(
       "s3",
       "select 1",

@@ -200,7 +200,7 @@ class EngineEventsStoreSuite extends KyuubiFunSuite {
       None,
       "sid1",
       "a",
-      ""))
+      None))
     store.saveStatement(SparkOperationEvent(
       "s4",
       "select 1",

@@ -213,7 +213,7 @@ class EngineEventsStoreSuite extends KyuubiFunSuite {
       None,
       "sid1",
       "a",
-      ""))
+      None))

     assert(store.getStatementList.size == 3)
     assert(store.getStatementList(2).statementId == "s4")

@@ -133,7 +133,7 @@ class EngineTabSuite extends WithSparkSQLEngine with HiveJDBCTestHelper {
       assert(resp.contains("sqlstat"))

       // check sql stats table title
-      assert(resp.contains("Query Execution"))
+      assert(resp.contains("Query Details"))
     }
   }
